The Planet Inside a Computer

How Los Alamos scientists turned Cold War supercomputers into engines of planetary insight

- Kyle Dickman, Science Writer

In the fall of 1983, a thin plume of simulated smoke appeared inside a Los Alamos supercomputer. The smoke—nothing more than computer code on a cathode-ray display—wafted across continents, dimming them in gray. The image was digital and silent, but to the physicists watching, it felt apocalyptic. That was the point.

Earlier that year, astronomer and science communicator Carl Sagan had warned that a global nuclear war could ignite cities and forests, lofting soot high into the atmosphere, blocking sunlight, and triggering what he called a “nuclear winter.” Agriculture would collapse; entire ecosystems could fail. The idea sparked well-justified alarm. But was it true? At the Lab, physicist Bob Malone, hired years before to build an Earth system modeling program, was tasked with testing the theory.

Malone began building one of the first three-dimensional atmospheric models ever used for Earth systems research—a vast numerical experiment that simulated how smoke, heat, and radiation would circulate around the planet. “It was completely unprecedented,” says Elizabeth Hunke, an applied mathematician and Lab Fellow who later worked with Malone’s team. It was also prescient.

The sky would darken, yes, but according to the model, the world would not freeze. Having answered that question, Malone came to see that the same methods used to model nuclear smoke could also model Earth’s climate, not just the planet’s destruction. By the time his group finished its simulations in 1985, a new computing revolution was underway with Los Alamos at its center. The Department of Energy (DOE) went on to launch the Computer Hardware, Advanced Mathematics, and Model Physics (CHAMMP) program to bring Earth system modeling into the age of massively parallel supercomputers. What began as an exercise in national security evolved into one of the world’s most sophisticated efforts to understand the climate as a coupled, dynamic system. That understanding would help answer essential national security questions.

Earth system modeling is necessarily a distributed field. Modeling Earth’s web of interconnected systems requires scientific expertise as diverse as the systems themselves. A single global model depends on hundreds of scientists and software engineers, often located at institutions around the world. But it also requires computers powerful enough to weave the myriad systems together into one model. Los Alamos, one of the world’s leading high-performance computing centers, offered exactly this advantage. “The Lab’s access to high performance computing accelerated the entire field of Earth system science,” Hunke says.

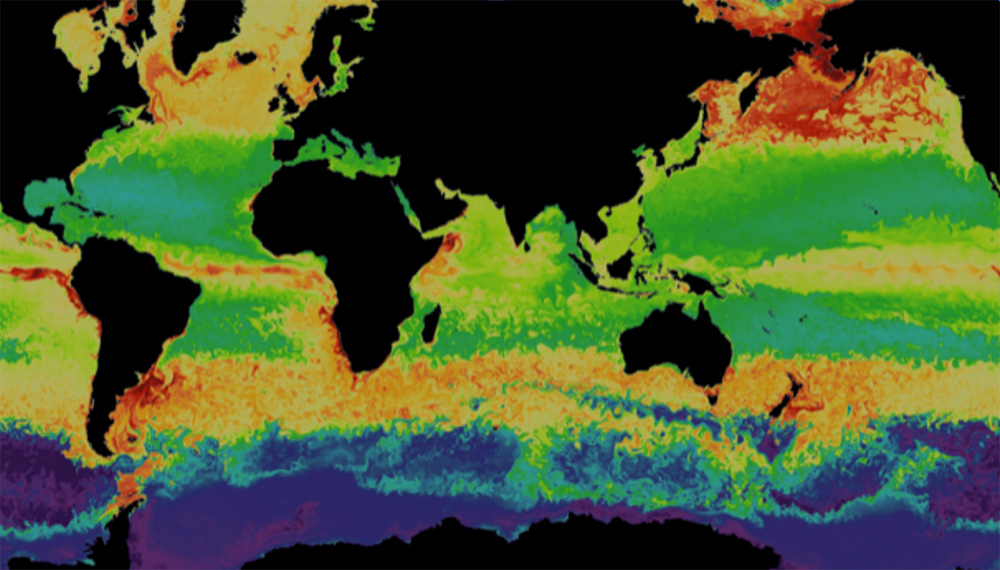

With the nuclear-winter question largely settled, Malone and his team turned Los Alamos’s computing power to one of Earth system science’s biggest unknowns—the ocean. In the 1990s, atmospheric modeling was already dominated by experts at the National Center for Atmospheric Research and the Geophysical Fluid Dynamics Laboratory, but few teams were focused on the role the oceans play in dictating climate.

“Fluid dynamics was our language,” Malone recalls. The same physics that describes the turbulence created by explosions could also describe ocean currents and eddies. Malone’s small team soon developed the Parallel Ocean Program, or POP, the first ocean model designed for distributed-memory computers. Its innovations (local boundary conditions, a realistic moving sea surface, and a new surface-pressure formulation) made it both faster and more accurate than earlier ocean models.

As the world’s Earth system modelers dug deeper into the field, they found more questions than answers. Still, one lesson emerged: individual models of single systems (the ocean, the atmosphere, ice sheets, land, forests, rivers) improved when they worked together. “Earth behaves as a complex adaptive system. Understanding how individual components are coupled together is key to predictions of the system as a whole,” says Andrew Roberts, a Los Alamos modeler who works on today’s Energy Exascale Earth System Model, a state-of-the-science DOE model that uses AI to make fast and accurate global and regional predictions. But back when Earth system models were in the earliest stages of development, one glaring omission was sea ice.

“You can’t accurately simulate the Earth system, let alone the oceans, without it,” says Hunke. Sea ice does more than freeze, melt, and drift around the poles. It caps the polar oceans, reflecting sunlight and regulating the planet’s temperature gradient, which drives short- and long-term weather patterns across the globe. But sea-ice physics is complex, and the era’s best models represented the ice as something like molasses: slow, stiff, and viscous. The calculations needed to simulate it were so computationally demanding that they were prone to crashing computers.

“You get much more of the Earth system when you have all the components talking to each other, through feedbacks.”

Hunke arrived at Los Alamos in 1994 as a postdoc and teamed up with mathematician John Dukowicz to build an accurate sea-ice model that could run within a full Earth system simulation without crashing it. “What we did at Los Alamos,” Hunke says, “was figure out how to make a physics-based model of sea ice efficient enough that it could run on supercomputers of the day.”

The code she and Dukowicz developed captured the physics of how real ice freezes, melts, fractures, jams, and drifts. It proved so accurate that, thirty years later, countries around the world, from the U.S. to Norway, Australia, and South Korea, still use the model to forecast the state of the polar oceans.

In the decades that followed, Los Alamos widened its reach. The Lab built the first physics-based wildfire model and extended its ice simulations to the Greenland and Antarctic ice sheets. “Those are critical components for understanding national security questions,” says Matt Hoffman, who leads a project to refine how Earth system models represent Antarctica.

To gather the data that feed these models, scientists took to the field. They measured aerosols and trace gases, tracked drought-killed forests, and folded those data back into models to improve global and regional predictions. By the 2010s, the Lab’s focus had stretched from Arctic tundra to tropical forests, from power grids to methane leaks. “Now we’re adding full biogeochemistry to our models—everything from aerosols and atmospheric chemistry to plankton and algae,” says Hunke.

Today, each new generation of models comes closer to serving as both Earth’s mirror and its crystal ball, reflecting how the planet works and hinting at what it might yet become. “But there is still so much more to do to get there,” says Roberts.

People also ask:

- Can computers really model the entire Earth? Not perfectly—but remarkably well. Scientists use Earth system models, vast mathematical frameworks that simulate how air, oceans, ice, and land interact to shape the planet’s climate. These digital Earths help predict everything from hurricane paths to long-term sea-level rise. At Los Alamos National Laboratory, physicists first adapted Cold War supercomputers to study “nuclear winter,” then used the same techniques to model Earth’s atmosphere, oceans, and ice as a coupled system.

- How are Earth’s systems connected? Earth’s systems—atmosphere, oceans, land, and ice—constantly exchange energy and matter. A change in one ripples through the rest. Modeling these connections requires immense computing power and physics expertise, both of which Los Alamos brings to the field.