The Science of Unpredictability

A Nobel laureate, a brilliant programmer, and two unexpected discoveries—the rise of nonlinear dynamics at Los Alamos.

- Kyle Dickman, Science Writer

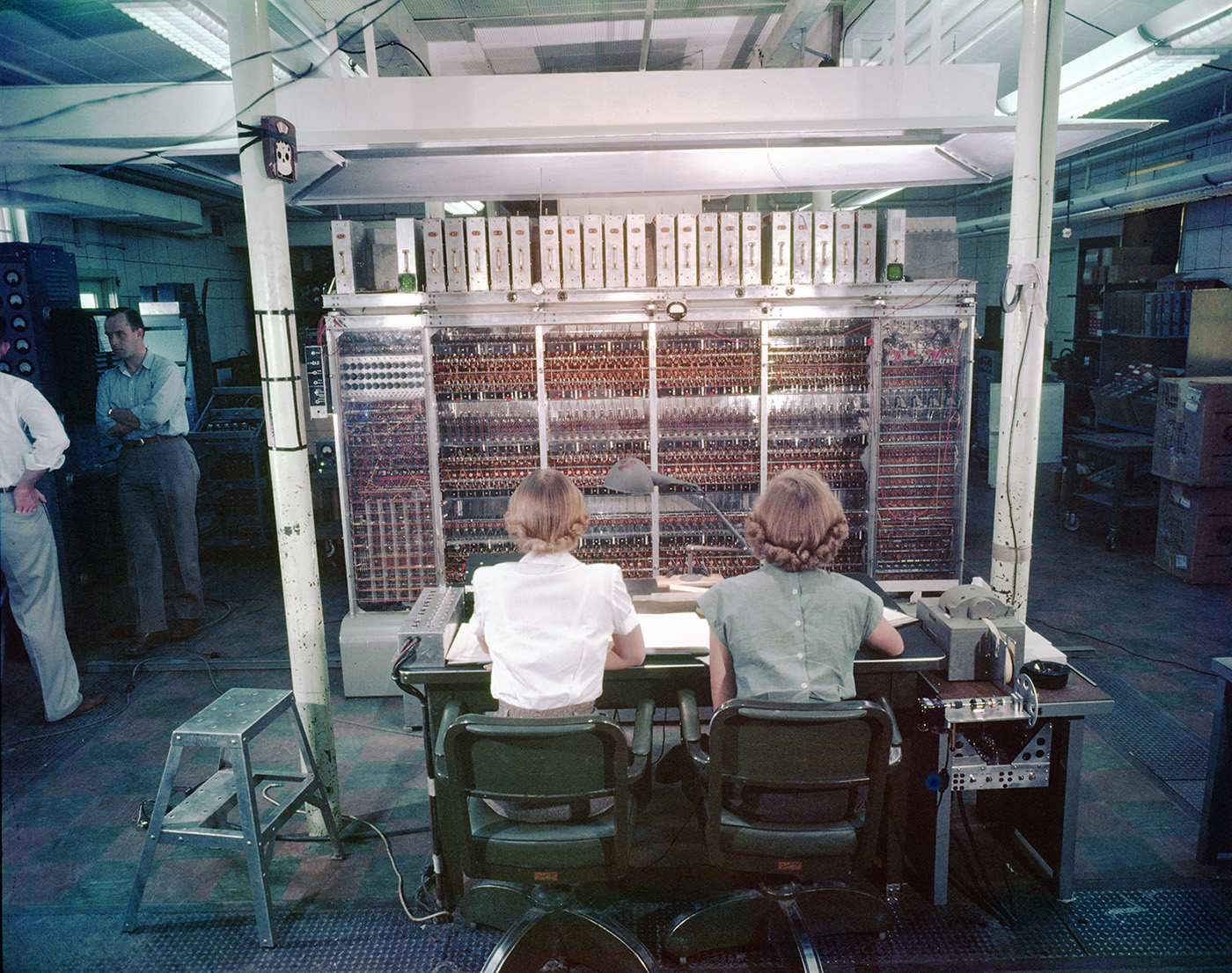

In 1955, 26-year-old mathematician and programmer Mary Tsingou sat in a cool, windowless room in a Los Alamos technical area before a wall of droning electronics: MANIAC. One of the world’s first scientific computers, MANIAC hummed, blinked, and hammered out rows of numbers on a mechanical printer. Tsingou had written code for a first-of-its-kind numerical experiment—now known as the Fermi–Pasta–Ulam–Tsingou, or FPUT problem. Conceived years earlier by Enrico Fermi, John Pasta, and Stanislaw Ulam, the experiment would uncover a paradox that would reshape how scientists think about systems as varied as the atmosphere, fusion plasmas, the economy, and even the rhythms of the human heart.

“Fermi passed away in 1954 and never saw the full paradox his idea would uncover,” says Avadh Saxena, a physicist and Lab Fellow who studies nonlinear phenomena at Los Alamos. “But the results were a watershed moment. They showed that nonlinear systems behave in surprisingly stable and structured ways, even when intuition says they should fall apart.”

The simulation Tsingou coded and ran over the next few years modeled a one-dimensional line of masses connected by springs. Simple as it was, the model captured the nonlinear interactions of atoms, a system too small for experiments to observe directly. Fermi had designed it to probe a fundamental assumption among physicists at the time: that even a small nonlinearity should be enough to make energy placed in one mode of vibration spread out into all the others until it was shared evenly, a process known as thermalization. To test that assumption, Fermi introduced a small nonlinear term into the springs’ restoring force. By hand, Tsingou wrote out the code and algorithm to simulate the problem on MANIAC.
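
To make the setup concrete, here is a minimal sketch in plain Python of a chain like the one described above. It assumes the quadratic ("alpha") form of the nonlinearity, fixed ends, unit masses and spring constants, and illustrative parameters N = 32 and α = 0.25. The function names, time step, and integrator (velocity Verlet) are choices made for this sketch, not a reconstruction of Tsingou's MANIAC code. The run starts with all the energy in the lowest vibration mode and records what share of the energy stays there.

```python
import math

# Illustrative sketch (assumptions): unit masses and spring constants, fixed ends,
# and the quadratic "alpha" nonlinearity F = d + alpha * d**2 in each spring.


def fput_forces(q, alpha):
    """Net force on each interior mass given displacements q (length N)."""
    x = [0.0] + q + [0.0]                              # walls hold q_0 = q_{N+1} = 0
    d = [x[i + 1] - x[i] for i in range(len(x) - 1)]   # stretch of each of the N+1 springs
    t = [di + alpha * di * di for di in d]             # linear tension plus small quadratic term
    return [t[i + 1] - t[i] for i in range(len(q))]    # pull from the right minus pull from the left


def total_energy(q, p, alpha):
    """Full energy: kinetic plus the potential whose derivative is the tension above."""
    x = [0.0] + q + [0.0]
    d = [x[i + 1] - x[i] for i in range(len(x) - 1)]
    return 0.5 * sum(pj * pj for pj in p) + sum(0.5 * di**2 + alpha / 3.0 * di**3 for di in d)


def simulate_fput(n=32, alpha=0.25, dt=0.2, t_max=10000.0, sample_every=50):
    """Velocity-Verlet run starting in mode 1; returns sample times,
    mode 1's share of the harmonic mode energy, and the total energy."""
    scale = math.sqrt(2.0 / (n + 1))
    # Orthonormal sine basis: row k-1 is the k-th normal mode of the linear chain.
    modes = [[scale * math.sin(j * k * math.pi / (n + 1)) for j in range(1, n + 1)]
             for k in range(1, n + 1)]
    omega = [2.0 * math.sin(k * math.pi / (2 * (n + 1))) for k in range(1, n + 1)]
    q = [math.sin(j * math.pi / (n + 1)) for j in range(1, n + 1)]  # all energy in mode 1
    p = [0.0] * n
    f = fput_forces(q, alpha)
    times, fractions, energies = [], [], []
    steps = int(round(t_max / dt))
    for step in range(steps + 1):
        if step % sample_every == 0:
            mode_energy = []
            for row, w in zip(modes, omega):
                amp = sum(s * qj for s, qj in zip(row, q))   # modal displacement
                vel = sum(s * pj for s, pj in zip(row, p))   # modal velocity
                mode_energy.append(0.5 * (vel * vel + w * w * amp * amp))
            times.append(step * dt)
            fractions.append(mode_energy[0] / sum(mode_energy))
            energies.append(total_energy(q, p, alpha))
        if step == steps:
            break
        # One velocity-Verlet step (half kick, drift, half kick).
        p = [pj + 0.5 * dt * fj for pj, fj in zip(p, f)]
        q = [qj + dt * pj for qj, pj in zip(q, p)]
        f = fput_forces(q, alpha)
        p = [pj + 0.5 * dt * fj for pj, fj in zip(p, f)]
    return times, fractions, energies
```

Setting `alpha=0.0` switches off the nonlinearity, and mode 1 then keeps its entire share, because the modes of a linear chain never exchange energy. With `alpha > 0`, printing `fractions` at intervals shows energy leaving mode 1; running longer (for example `t_max=12000.0`) lets you look for its return.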

“We made flowcharts,” recalled Tsingou in later interviews. She is now in her nineties and still lives in Los Alamos. “Because when you’re debugging a problem, you want to know where you are so you can stop at different places and look at things. Like any project, you have some idea, but as you go along, you have to make adjustments and corrections, or you have to back up and try a different approach.”

The full set of simulations unfolded over years. But one winter day, as Tsingou watched the machine clacking out results, she saw that the energy initially spread into other modes, just as Fermi and his coauthors had expected. Then something extraordinary happened. Energy flowed back, almost perfectly, into the mode where it had started. It was as if the system remembered its initial state and returned to it. Instead of drifting inexorably toward equilibrium, the chain let Tsingou and her collaborators demonstrate, numerically and for the first time, that the natural world does not always behave according to the tidy expectations of linear dynamics. Nature is messy.

To appreciate the magnitude of this result, it helps to start with a refresher on what “linear” means. In a linear system, causes and effects scale proportionally: double the force, double the response. The response to two causes acting together is simply the sum of their separate responses. Such systems are orderly, predictable, and mathematically tractable. Our engineered world depends on linear relationships, which explain how structures like arches, bridges, and walls hold firm. But much of nature doesn’t play by these rules. In many natural systems, energy not only fails to disperse evenly; it can be trapped, amplified, or assembled into coherent structures that arise out of apparent disorder.
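
A toy comparison makes the definition concrete. This sketch assumes a unit-stiffness spring whose force is F = d in the linear case and F = d + αd² with a small α in the nonlinear case (the kind of quadratic correction used in the "alpha" version of the FPUT chain); the function names are hypothetical. It checks two signatures of linearity: doubling the stretch doubles the force, and the force from two stretches combined equals the sum of their separate forces.

```python
def spring_force(extension, alpha=0.0):
    """Restoring force of a unit-stiffness spring (hypothetical example).

    alpha = 0 gives a linear, Hooke's-law spring; alpha > 0 adds a small
    quadratic term of the kind used in the alpha-FPUT model.
    """
    return extension + alpha * extension ** 2


def response_ratio(alpha, extension=0.1):
    """How much the force grows when the stretch is doubled."""
    return spring_force(2 * extension, alpha) / spring_force(extension, alpha)


def superposition_gap(alpha, a=0.1, b=0.05):
    """Zero for a linear law: force(a + b) equals force(a) + force(b)."""
    return spring_force(a + b, alpha) - (spring_force(a, alpha) + spring_force(b, alpha))


print(response_ratio(0.0))      # 2.0: double the stretch, double the force
print(response_ratio(0.25))     # slightly more than 2: proportionality is broken
print(superposition_gap(0.25))  # nonzero: the parts no longer simply add up
```

For the quadratic law the superposition gap works out to 2αab, so it vanishes only when α = 0; that leftover cross term is the kind of coupling through which the FPUT chain's modes can trade energy.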

One shining example of how discoveries sparked by FPUT shaped the modern world is the soliton, a stable packet of energy that travels without dispersing. Understanding solitons proved essential to the development of long-distance optical fiber communications, which depend on pulses of light that carry information across continents. Put more simply: solitons helped make the internet possible.

Beyond the technologies it eventually enabled, the FPUT experiment revealed the power of simulation as a new kind of scientific tool, one capable of uncovering physical behavior that neither hand calculation nor experiment could reach. It also forced science to reckon with a world where prediction, stability, and control look very different from what linear dynamics suggested. “Once you move beyond the simplest interactions, everything becomes emergent, coupled, and sensitive,” Saxena says. “Tiny nudges can have enormous consequences.”

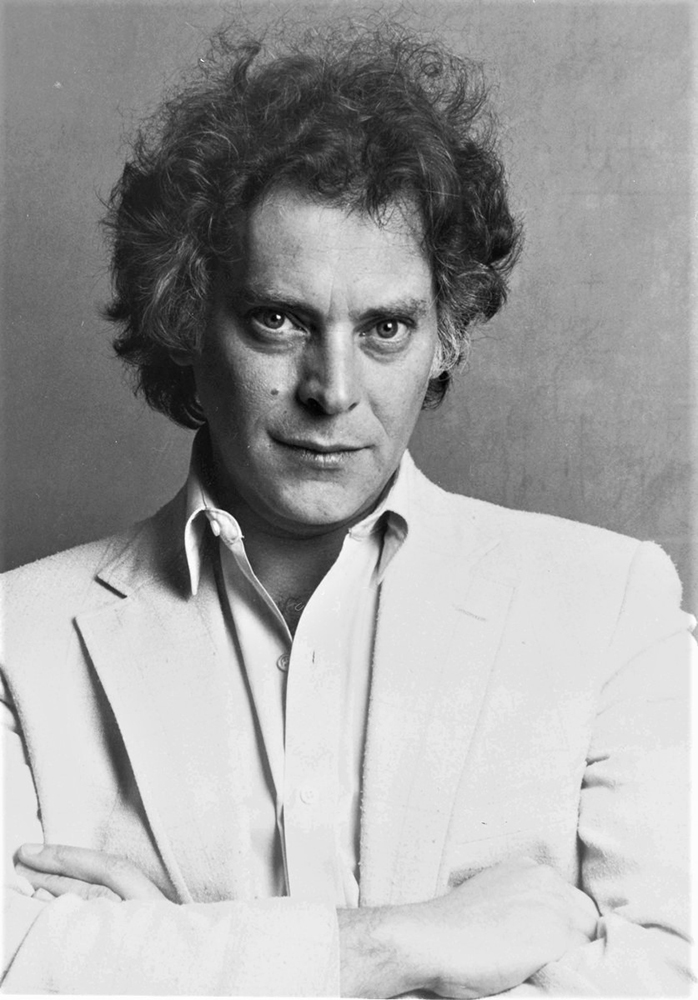

Two decades later, in the mid-1970s, came the Lab’s second major contribution to nonlinear science. That’s when a young theorist named Mitchell Feigenbaum left Virginia for Los Alamos. Soon after arriving, Feigenbaum began picking apart a different kind of nonlinear model, an iterated map, in which the state of a system at one moment determines its state at the next.

Feigenbaum was interested in systems governed by feedback, where outputs feed back into inputs. Such models appear everywhere, from population dynamics to electrical circuits. His questions were fundamental: when does orderly behavior break down, and how does regularity give way to irregular, unpredictable behavior? Is the transition smooth, or does it follow a deeper pattern? Famously too impatient to wait for time on the Lab’s supercomputers, Feigenbaum chased his intuition about chaos with a pencil and a programmable calculator.

Chaos, in the scientific sense, isn’t randomness or disorder. It is deterministic unpredictability. When many nonlinear systems are pushed harder, they don’t slide smoothly into chaos. Instead, their regular behavior fractures in stages. In this process, called period doubling, a system that once repeated a single cycle begins alternating between two states, then four, then eight, and so on. Feigenbaum noticed that each successive doubling occurred after a change in conditions about 4.67 times smaller than the one before it. Practically speaking, this meant that infinitely many doublings were squeezed into a finite range of conditions, piling up at a threshold beyond which the system turns chaotic and long-term prediction becomes impossible.
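
The shrinking ratio can be estimated numerically. The sketch below is a modern shortcut, not Feigenbaum's pencil-and-calculator procedure, and it rests on assumptions: it uses the quadratic map x → a − x² (equivalent to the widely studied logistic map after a change of variables) and applies Newton's method to find the "superstable" parameter values a_n at which the orbit starting from x = 0 returns to 0 after exactly 2ⁿ steps. These points sit inside successive period-doubling windows, and the ratio of successive gaps between them tends to the same constant as the gaps between the doublings themselves. The function names are hypothetical.

```python
def superstable_parameters(max_order=11, newton_steps=10):
    """Parameters a_n at which x = 0 returns to 0 after exactly 2**n steps
    of the map x -> a - x*x (an assumed stand-in for the logistic map)."""
    params = [0.0, 1.0]          # periods 1 and 2: 0 -> 0, and 0 -> 1 -> 0
    delta = 3.2                  # rough initial guess for the gap-shrink ratio
    for n in range(2, max_order + 1):
        a_prev2, a_prev = params[-2], params[-1]
        a = a_prev + (a_prev - a_prev2) / delta    # extrapolate toward the next value
        for _newton in range(newton_steps):
            x, dx_da = 0.0, 0.0
            for _iter in range(2 ** n):
                dx_da = 1.0 - 2.0 * x * dx_da      # chain rule, using the old x
                x = a - x * x
            a -= x / dx_da                         # Newton step on x_{2**n}(a) = 0
        delta = (a_prev - a_prev2) / (a - a_prev)
        params.append(a)
    return params


def delta_estimates(params):
    """Ratios of successive gaps between superstable parameters."""
    return [(params[i - 1] - params[i - 2]) / (params[i] - params[i - 1])
            for i in range(2, len(params))]


estimates = delta_estimates(superstable_parameters())
for order, d in enumerate(estimates, start=2):
    print(order, round(d, 6))
```

The early ratios are rough, but the later ones should settle close to 4.669, the more precise value of the number the text rounds to 4.67. Extrapolating each new guess from the previous gap keeps Newton's method near the intended root as the windows get narrower.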

“I called my parents that evening and told them that I had discovered something truly remarkable that, when I had understood it, would make me a famous man,” Feigenbaum later recalled. He did indeed become famous, at least in certain scientific circles, for showing that “the behavior of a wide class of nonlinear systems was governed by the same numbers.” From tornado formation to dripping faucets, Feigenbaum had uncovered a universal rule that governs how many systems march toward chaos.

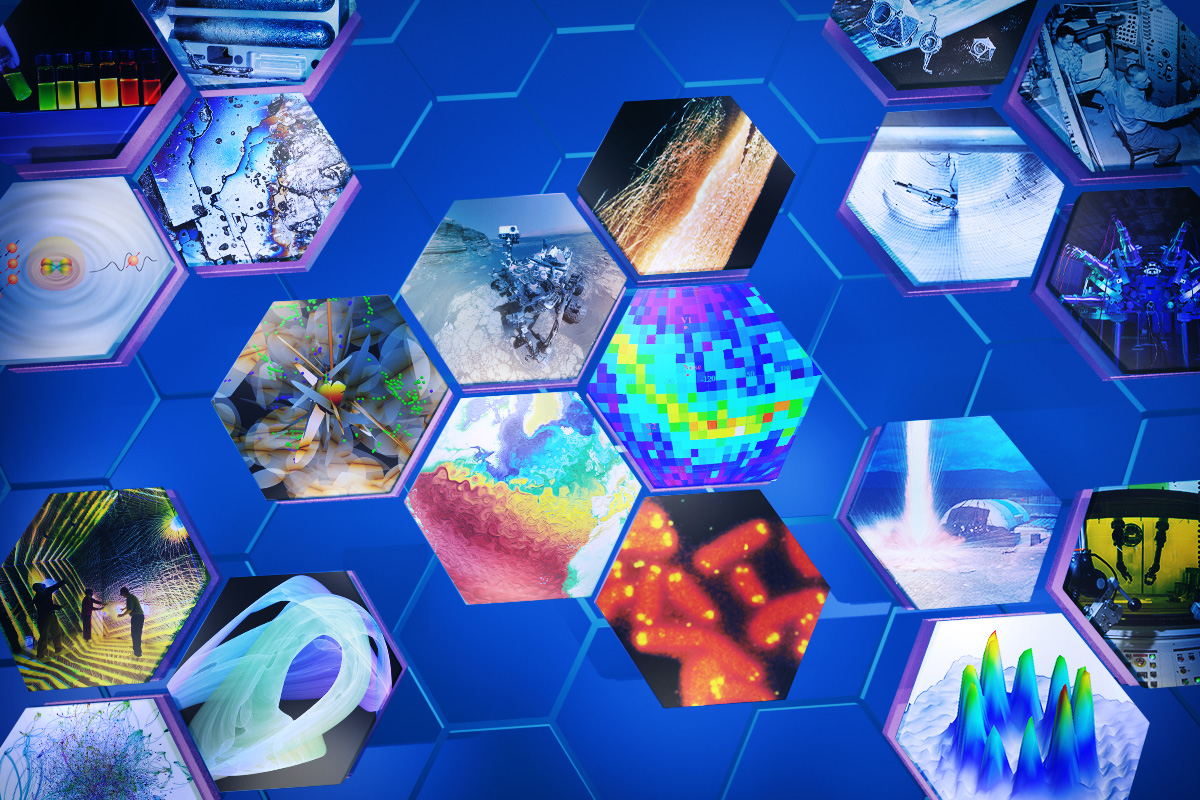

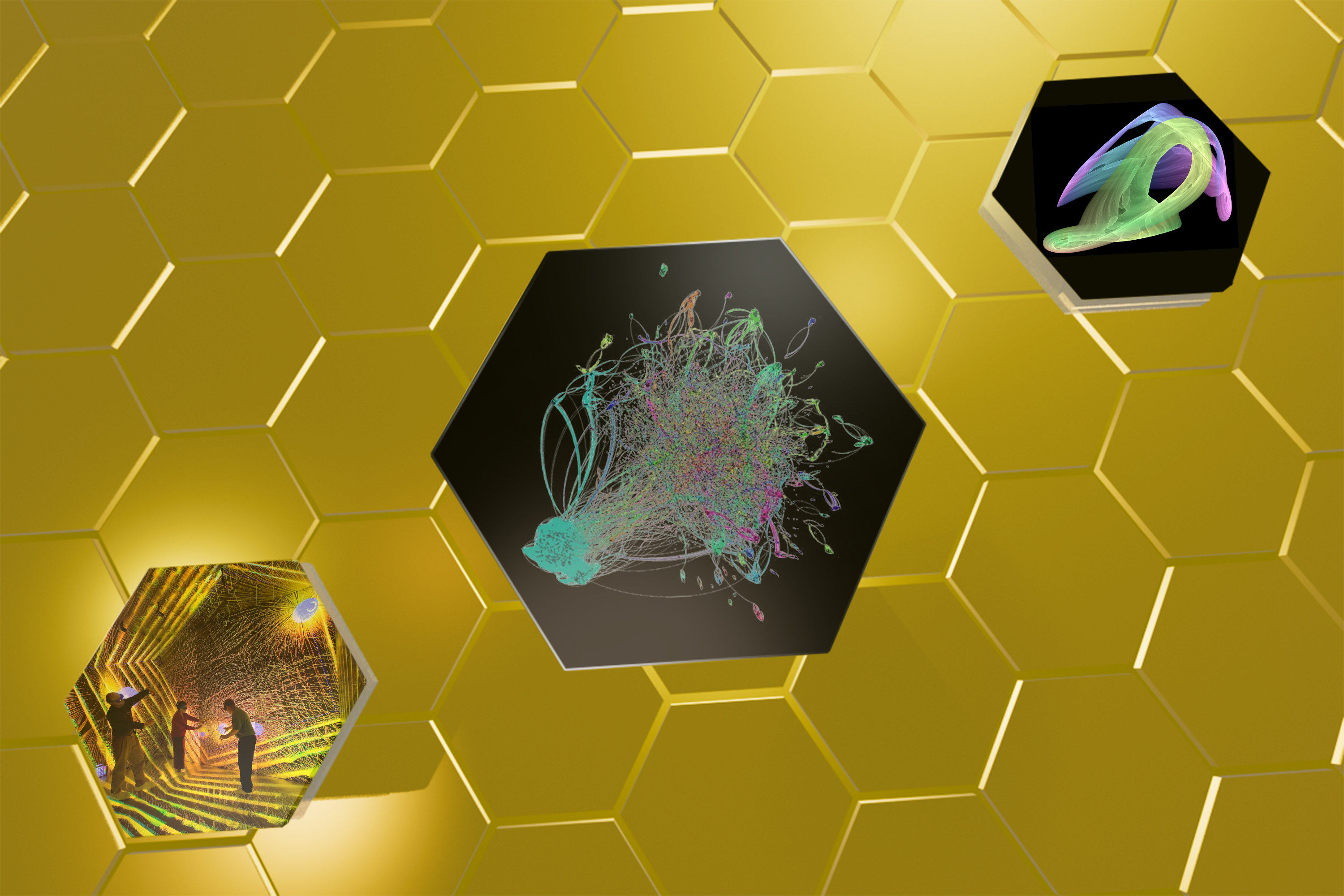

By 1980, Los Alamos was well known for its contributions to nonlinear dynamics, and that year it founded the Center for Nonlinear Studies, the first institute of its kind. In the decades since, researchers there have shown that the same nonlinear mathematics appears in shockwave propagation, material failure, ocean-atmosphere coupling, earthquake rupture, and astrophysical explosions. “Once you recognize the mathematics,” Saxena says, “the connections become obvious. Everything is connected.”

Saxena and others are now applying nonlinear ideas back to the field’s computational roots through studies of quantum materials. Their work focuses on designing topological materials that could enable new kinds of quantum conductors, sensors, and diagnostics, tools that could push computational science beyond the limits of classical models. If realized, these materials could form the basis of quantum computers designed to tackle problems that classical computers struggle to represent at all, like the complex nonlinear systems Fermi, Pasta, Ulam, and Tsingou first wrestled with 70 years ago.

“Human creativity, intuition, and the scientific process remain our best tools for applying nonlinear ideas to the most complex problems we face,” says Saxena.

People Also Ask:

What is chaos theory? Chaos theory is the study of systems that follow precise rules but behave in unpredictable ways. In chaotic systems, tiny differences in starting conditions can grow rapidly over time, making long-term prediction impossible even though the underlying physics is fully deterministic. This sensitivity is often described by the “butterfly effect,” where a small disturbance can lead to large consequences elsewhere.

Chaos theory does not describe randomness or disorder. Instead, it reveals hidden structure and patterns in complex systems, showing that the transition from orderly behavior to unpredictability often follows universal mathematical rules. These ideas are central to understanding weather, turbulent flows, heart rhythms, ecosystems, and many other natural and engineered systems.
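
The butterfly effect can be seen in a few lines of code. This sketch assumes the logistic map x → r·x·(1 − x) with r = 4, a standard chaotic example that the text itself does not name; the function names are hypothetical. Two starting values that differ by one part in ten billion are iterated under the same fully deterministic rule, and the gap between them is recorded at each step.

```python
def logistic(x, r=4.0):
    """One step of the logistic map, a textbook deterministic feedback rule."""
    return r * x * (1.0 - x)


def separation_history(x0=0.2, eps=1e-10, steps=60, r=4.0):
    """Iterate two starting points eps apart and record how far apart they drift."""
    a, b = x0, x0 + eps
    gaps = []
    for _ in range(steps):
        a, b = logistic(a, r), logistic(b, r)
        gaps.append(abs(a - b))
    return gaps


gaps = separation_history()
for n in (0, 10, 20, 30, 40):
    print(f"step {n + 1:2d}: gap = {gaps[n]:.3e}")
```

For this map the gap roughly doubles per step on average, so a difference far too small to measure grows to the size of the values themselves within a few dozen steps. Calling `separation_history(eps=0.0)` instead gives a gap of exactly zero at every step: the rule is fully deterministic, and only the uncertainty in the starting point makes prediction fail.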