From Monte Carlo to Exascale

How Los Alamos built the algorithms that changed science and defined an era

- Eleanor Hutterer, Editor

“At the time of the Manhattan Project we could barely calculate a basic one-dimensional model of an implosion,” says Bill Priedhorsky, retired Los Alamos physicist and Laboratory Fellow. “The key questions were: Is it going to implode or come apart? Will it stay together long enough to get any nuclear yield?” These were incredibly hard questions, never before calculated, and the computers that Laboratory scientists relied on for answers to these questions were humans, doing the calculations by hand with mechanical calculators.

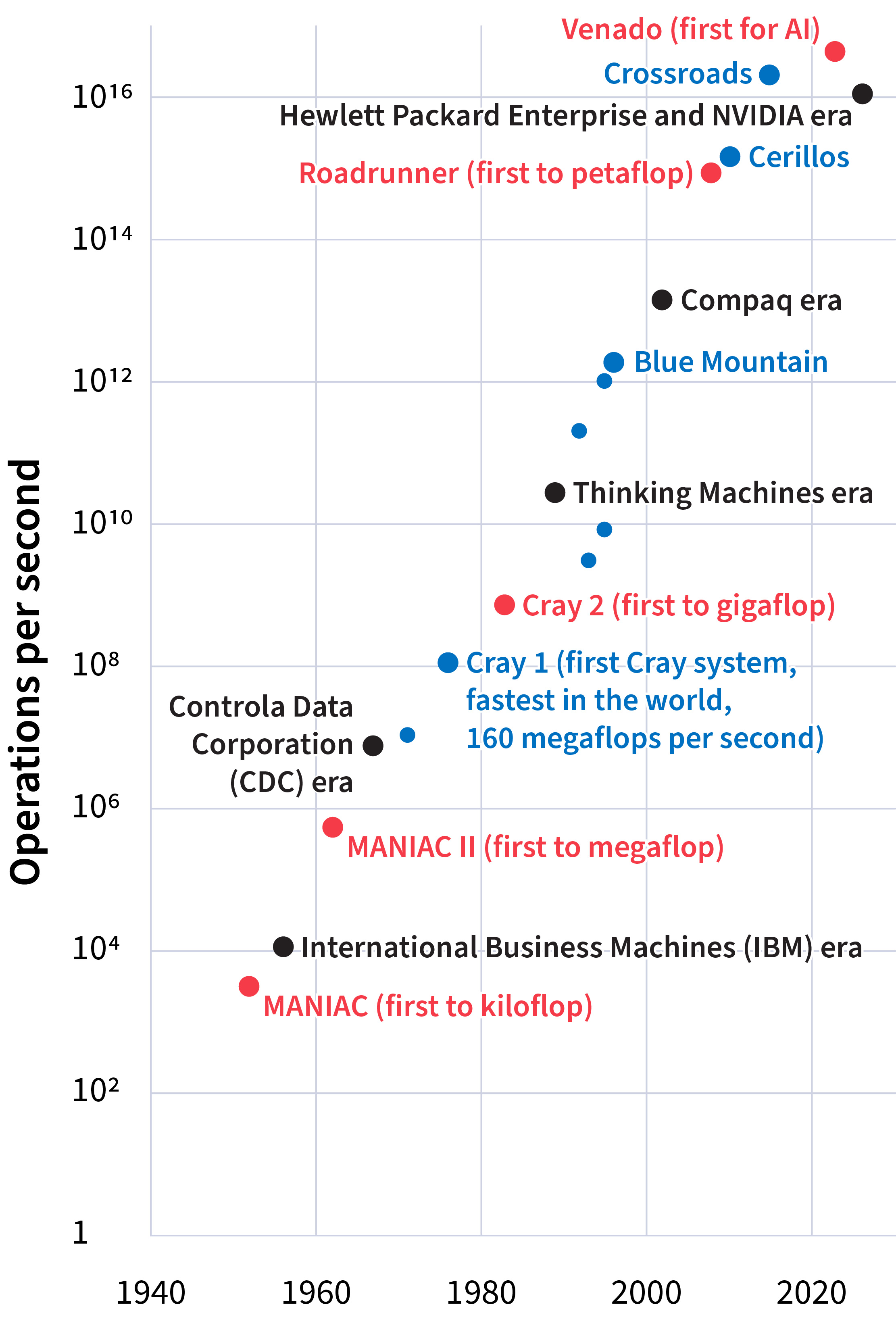

Today, the newest supercomputers at Los Alamos are Crossroads and Venado, which both perform on the petaflop scale (10¹⁵ operations per second). Advancing from mechanical calculators doing less than one operation per second to petaflop supercomputers is more than a 50 percent increase in computational power every year, compounded continuously for eight decades, which is staggering. But computing power is only as useful as the algorithms it drives. Here, too, Los Alamos has compounded continual growth.
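The growth figure can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes a starting point of exactly one operation per second (the article says "less than one"), an end point of 10¹⁵, and a span of exactly 80 years. The function name `annual_growth` is made up for this example.

```python
import math

def annual_growth(start_ops, end_ops, years):
    """Return (continuous growth rate per year, equivalent year-over-year increase)."""
    # Continuous compounding: end = start * exp(rate * years)
    rate = math.log(end_ops / start_ops) / years
    # Convert the continuous rate into a plain percentage increase per year
    return rate, math.exp(rate) - 1.0

# Assumed inputs: 1 op/s then, 1e15 op/s now, 80 years apart
rate, yearly = annual_growth(1.0, 1e15, 80)
print(f"continuous rate: {rate:.3f} per year")
print(f"year-over-year increase: {yearly:.1%}")
```

Under these assumptions the yearly increase comes out at about 54 percent, consistent with the "more than 50 percent" quoted above. A slower starting point would push the figure slightly higher.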

The problems that the Laboratory tackles today—from modernizing the nuclear stockpile to modeling the Universe at its largest and smallest scales—involve the predictive modeling of complex systems. These kinds of models need sophisticated algorithms, usually custom built to address a specific question. The algorithms in turn require ever increasing raw computing power. For over 80 years, the scientific progress of Los Alamos has been driven by the interplay of problem complexity, algorithmic sophistication, and computing power, as each new challenge demands more advanced methods and the machines to meet it.

Monte Carlo Methods

Los Alamos led the way in applied algorithm development starting with the Monte Carlo method, first explored by Los Alamos physicist and mathematician Stanislaw Ulam in 1946. Ulam’s Monte Carlo method, named for the famous casino in Monaco, is a mathematical approach that uses repeated random sampling to model certain complex phenomena. Ulam devised it while thinking about a card game, but he and his Los Alamos colleagues quickly saw its utility for problems describing neutron diffusion within a nuclear weapon.
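To make "repeated random sampling" concrete, here is a minimal toy sketch of the idea applied to neutrons crossing a slab of material. It is not the historical Los Alamos calculation: the one-dimensional geometry, the forward-or-backward scattering rule, and every name and parameter (`transmitted_fraction`, `mean_free_path`, `absorb_prob`) are simplifying assumptions chosen for illustration.

```python
import random

def transmitted_fraction(thickness, mean_free_path, absorb_prob, n_neutrons, seed=0):
    """Estimate the fraction of neutrons that cross a 1D slab (toy model)."""
    rng = random.Random(seed)
    transmitted = 0
    for _ in range(n_neutrons):
        x, direction = 0.0, 1.0  # each neutron enters at the front face, moving forward
        while True:
            # Sample the distance to the next collision from an exponential distribution
            x += direction * rng.expovariate(1.0 / mean_free_path)
            if x >= thickness:
                transmitted += 1  # escaped through the back face
                break
            if x < 0.0:
                break  # leaked back out of the front face
            if rng.random() < absorb_prob:
                break  # absorbed at this collision
            # Otherwise the neutron scatters: pick forward or backward at random
            direction = rng.choice((-1.0, 1.0))
    return transmitted / n_neutrons

print(transmitted_fraction(thickness=2.0, mean_free_path=1.0,
                           absorb_prob=0.3, n_neutrons=50_000))
```

Each simulated neutron is one random "history"; the answer is simply the average over many histories, and it becomes more precise as more are run. When every collision absorbs (`absorb_prob=1.0`), the estimate should approach the known exponential attenuation law, which gives a simple sanity check.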

Through the 1950s and 1960s, the Lab’s fleet of fledgling electronic computers reached 10⁴, then 10⁶ operations per second. In parallel, a suite of Monte Carlo spinoff methods was written into codes for these computers to model increasingly complex neutron and photon transport problems. Chief among these descendants are the Metropolis algorithm, published in 1953, for sampling from complex probability distributions, and the general-purpose Monte Carlo N-Particle (MCNP) transport code, which Los Alamos scientists devised in 1977 and released to the public in 1983.

The Metropolis algorithm and MCNP, as well as other progeny of the original Monte Carlo method, are still widely used today in science, engineering, and even game theory. These algorithms have had enormous influence on computation and machine learning methods and together constitute one of the most prominent and longest-lasting algorithmic contributions by Los Alamos scientists.
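For readers curious how the Metropolis algorithm samples from a complex probability distribution, the following is a minimal sketch of the basic random-walk form of the idea. The target distribution (a bell curve), the uniform proposal, and the names `metropolis`, `log_density`, and `step` are assumptions for this illustration, not details taken from the 1953 paper.

```python
import math
import random

def metropolis(log_density, x0, n_samples, step=2.0, seed=0):
    """Draw samples from a 1D distribution known only up to a constant factor."""
    rng = random.Random(seed)
    x, logp = x0, log_density(x0)
    samples = []
    for _ in range(n_samples):
        # Propose a nearby point using a symmetric random jump
        proposal = x + rng.uniform(-step, step)
        logp_new = log_density(proposal)
        # Always accept uphill moves; accept downhill moves with
        # probability equal to the ratio of the two densities
        if logp_new >= logp or rng.random() < math.exp(logp_new - logp):
            x, logp = proposal, logp_new
        samples.append(x)  # a rejected move repeats the current point
    return samples

# Assumed target: an unnormalized standard bell curve, exp(-x^2 / 2)
draws = metropolis(lambda x: -0.5 * x * x, x0=0.0, n_samples=50_000)
mean = sum(draws) / len(draws)
var = sum((d - mean) ** 2 for d in draws) / len(draws)
print(f"mean = {mean:.2f}, variance = {var:.2f}")
```

The key design point is that only ratios of densities are needed, so the distribution never has to be normalized. For this target, the sample mean and variance should land near 0 and 1.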


The PARTISN (Parallel, Time-Dependent SN) code is another computational tool developed at Los Alamos and now used globally for a variety of applications. As computing power increased through the 1980s, advancing from about 10⁸ to 10¹⁰ operations per second, new three-dimensional and time-dependent algorithms were developed and eventually unified into PARTISN. The tool was developed for the same type of particle transport questions as MCNP but comes at the problem from a different angle. MCNP is stochastic: stand in a room and throw a billion pieces of confetti, then watch where each piece lands and average the results. PARTISN is deterministic: predict and map the average density of confetti across the whole room at once. They are complementary approaches that, when used together for cross-verification, yield a more detailed result than either code can alone.
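The confetti analogy can be sketched in code. The toy below is neither MCNP nor PARTISN and does not implement their methods; it assumes a one-dimensional "room" in which each piece of confetti hops one cell left or right per step, and it solves the same problem both ways. The function names are invented for this illustration.

```python
import random

def confetti_stochastic(n_pieces, n_steps, seed=0):
    """Throw many pieces one at a time and tally where each lands."""
    rng = random.Random(seed)
    counts = [0] * (2 * n_steps + 1)
    for _ in range(n_pieces):
        pos = n_steps  # every piece starts in the centre cell
        for _ in range(n_steps):
            pos += rng.choice((-1, 1))
        counts[pos] += 1
    return [c / n_pieces for c in counts]

def confetti_deterministic(n_steps):
    """Evolve the average density over the whole room at once."""
    density = [0.0] * (2 * n_steps + 1)
    density[n_steps] = 1.0  # all of the confetti starts in the centre cell
    for _ in range(n_steps):
        new = [0.0] * len(density)
        for i, d in enumerate(density):
            if d:
                # Half of each cell's density moves left, half moves right
                new[i - 1] += 0.5 * d
                new[i + 1] += 0.5 * d
        density = new
    return density

mc = confetti_stochastic(n_pieces=20_000, n_steps=10)
det = confetti_deterministic(n_steps=10)
print(max(abs(a - b) for a, b in zip(mc, det)))  # largest disagreement between the two
```

The stochastic answer carries statistical noise that shrinks as more pieces are thrown, while the deterministic answer has no noise but depends on how finely the room is divided into cells. Comparing the two, as in the last line, is a small-scale version of cross-verification.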

Multiscale Modeling

All of the solid materials that make up a nuclear weapon quickly turn into gas and plasma during detonation, so all but the earliest stages of detonation must be modeled as fluids. Los Alamos algorithms again led the way. Robert Richtmyer and Frank Harlow built much of the foundation for the field of computational fluid dynamics during their time at the Lab. Richtmyer led the Theoretical division in the late 1940s and early 1950s. He helped get Monte Carlo going on 1950s computers for particle transport questions, while at the same time pioneering algorithms for shock wave and fluid dynamics problems. Richtmyer’s theory of shock instabilities and turbulence lies at the heart of simulations of systems relevant to the modern Laboratory mission, like inertial confinement fusion.

Harlow, a theoretical physicist at the Lab in the 1950s, advanced Richtmyer’s ideas by creating innovative numerical techniques that allowed complex fluid flows, turbulence, and instabilities to be simulated computationally for the first time. This work, in conjunction with continual gains in computing power, moved fluid dynamics from the theoretical realm to one of large-scale computer modeling, driving major advances in weapons modeling capabilities, and later to simulations in astrophysics, plasma physics, and engineering.

From fluid dynamics, which applies to liquids, gases, and plasmas, Richtmyer narrowed his focus to hydrodynamics, which is specific to incompressible liquids like water. He and Los Alamos luminaries John von Neumann and Rudolf Peierls created hydrodynamics models based on Lagrangian methods, which observe a fluid’s motion by following individual fluid units as they move through space and time. These methods, useful for studying interfaces and deformations, remain central to the Laboratory’s simulations in shock physics, hydrodynamic implosion, and plasma physics. In the 1970s, Lab scientists merged Lagrangian methods with Eulerian methods, which use a fixed observation point to watch fluid flow by, producing a powerful hybrid approach to hydrodynamics computation called ALE (Arbitrary Lagrangian-Eulerian) techniques.
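The difference between the two viewpoints can be shown with a deliberately simple sketch: a quantity carried along at constant speed in one dimension. This is an illustration under stated assumptions (constant positive velocity, a periodic grid, a first-order upwind update), not a Laboratory code and not an ALE implementation. Both function names are hypothetical.

```python
def lagrangian_step(positions, velocity, dt):
    """Lagrangian view: follow each fluid parcel as it moves."""
    return [x + velocity * dt for x in positions]

def eulerian_upwind_step(values, velocity, dx, dt):
    """Eulerian view: stay at fixed grid cells and update what flows past.

    Assumes velocity > 0 and a periodic domain (the last cell feeds the first).
    """
    c = velocity * dt / dx  # fraction of a cell crossed per time step
    return [values[i] - c * (values[i] - values[i - 1])
            for i in range(len(values))]

# A blob of material sitting in cell 1 of a four-cell periodic grid
grid = [0.0, 1.0, 0.0, 0.0]
print(eulerian_upwind_step(grid, velocity=1.0, dx=1.0, dt=1.0))

# The same blob tracked as a single parcel located at x = 1.0
print(lagrangian_step([1.0], velocity=1.0, dt=1.0))
```

In the Lagrangian version the grid itself moves with the material, which keeps interfaces sharp. In the Eulerian version the grid never moves, which copes well with large distortions but can smear sharp features when the time step does not cross exactly one cell. Hybrid approaches aim to combine the strengths of both.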

In the 1990s, as Lab computers approached 10¹² operations per second, Los Alamos scientist Arthur Voter developed Accelerated Molecular Dynamics (AMD) methods. Molecular dynamics describes the movements of atoms and molecules based on Isaac Newton’s laws of motion. But certain phenomena, like crystal rearrangement in fast-moving materials or radiation damage effects, occur very slowly from the point of view of a molecule in motion. Voter’s idea was to make state changes occur more rapidly in a simulation while preserving the correct kinetics. Voter’s AMD algorithms accelerated molecular dynamics simulations by orders of magnitude and remain an area of active development at Los Alamos.
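One way to see how a simulation can reach rare events sooner without distorting their timing is the statistical idea behind parallel replica dynamics, one member of the AMD family in the published literature. The sketch below is not a molecular dynamics code. It assumes the waiting time for a state change follows an exponential distribution with a known rate and simply draws those waiting times at random; all names are hypothetical.

```python
import random

def parallel_replica_escape(rate, n_replicas, rng):
    """Run independent copies of the system until the first one changes state."""
    # Each replica's waiting time is assumed to be exponentially distributed
    times = [rng.expovariate(rate) for _ in range(n_replicas)]
    wall_time = min(times)  # stop every replica when the first one escapes
    # The simulation clock advances by the time summed over all replicas
    return wall_time, wall_time * n_replicas

def mean_times(rate, n_replicas, n_trials, seed=0):
    """Average wall-clock and simulated escape times over many trials."""
    rng = random.Random(seed)
    wall_total = sim_total = 0.0
    for _ in range(n_trials):
        wall, sim = parallel_replica_escape(rate, n_replicas, rng)
        wall_total += wall
        sim_total += sim
    return wall_total / n_trials, sim_total / n_trials

wall, sim = mean_times(rate=1.0, n_replicas=8, n_trials=20_000)
print(f"mean wall-clock wait: {wall:.3f}, mean simulated wait: {sim:.3f}")
```

With eight replicas, the average wall-clock wait should be close to one eighth of the true average waiting time, while the summed simulation clock should still average close to the true value. That is the sense in which the state change arrives faster while the kinetics stay correct.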

Problem complexity, algorithmic novelty, and computing capacity at the Lab have advanced tremendously through mutual synergy, and all three continue to evolve together. The pursuit of ever more complex problems demands more sophisticated algorithms and computational platforms to solve them. Likewise, innovation in algorithm design and high-performance computing architectures expands the frontier of what questions can even be asked. This dynamic cycle—where complexity drives computation, computation drives insight, and insight feeds new complexity—is key to the Laboratory’s legacy of discovery.

People Also Ask:

- What are computer algorithms? Computer algorithms are sets of step-by-step instructions that tell a computer how to solve a specific problem or perform a task. Most algorithms use math, like algebra, calculus, and probability, to turn input data into answers to specific questions in computational physics, computer science, applied mathematics, and more.