Science Highlights, May 13, 2015

Awards and Recognition

Computer, Computational and Statistical Sciences

Performance modeling of a temperature accelerated molecular dynamics (AMD) code

Earth and Environmental Sciences

Multi-Year Underground Nuclear Explosion Signatures Experiment Project launched

High Performance Computing

Analytical models evaluate the costs of reliability in fast storage tiers

Materials Physics and Applications

Shining light on the exotic hidden order phase in a uranium compound

Materials Science and Technology

Neutron diffraction studies of high explosive powder during compaction

Science on the Roadmap to MaRIE

Neutron scattering of pore morphology in shale reveals pore structure and gas behavior

Awards and Recognition

Steven Girrens and Daniel Livescu elected ASME Fellows

Steve Girrens

The American Society of Mechanical Engineers (ASME) has chosen Steve Girrens (Associate Director for Engineering, ADE) and Daniel Livescu (Computational Physics and Methods, CCS-2) as Fellows. The ASME Committee of Past Presidents confers the Fellow grade of membership on worthy candidates to recognize their outstanding engineering achievements. Successful candidates must have 10 or more years of continuous corporate membership in ASME, have been responsible for significant engineering achievements, and have 10 or more years of active practice.

Steve Girrens is recognized for his leadership of the engineering activities associated with the Laboratory’s national security mission. The citation stated that he “has tirelessly promoted engineering innovation in our nation’s nuclear weapons program and other global security work, developed and sustained research collaborations with private industry, promoted professional society activities and championed new collaborative education and research programs with domestic and foreign university partners. Steve also volunteers significant time on various boards and organizations in the Los Alamos community.” He was selected in the ASME Fellow category of Industrial Leadership/Management. This category honors an executive or top-level manager who has achieved national or international prominence as a leader, innovator, and spokesman for his or her particular industry. The recipients must have a documented history of successful major accomplishments in management and have received recognition for significant engineering achievement.

Girrens received a PhD in mechanical engineering from Colorado State University and joined the Lab in 1979. He has diverse experience as a mechanical engineer and project manager developing and applying engineering technologies to solve problems in national security. Areas of expertise include mechanical engineering design and analysis, fracture and thermo-mechanics analysis, computational mechanics, structural seismic response, and project and personnel management. Girrens has technical organization management experience relevant to nuclear operations and facilities including safety basis development and implementation, operational readiness, conduct of operations, and compliance programs. Technical contact: Steven Girrens

Daniel Livescu

Daniel Livescu is an authority in the field of fluid mechanics and has made significant contributions to the LANL/DOE stewardship mission as a Principal Investigator for the NNSA Defense Science Programs. He received the award in the ASME Research and Development category. Fellows in this category are generally recognized as having made a noteworthy invention, discovery, or advancement in the state of the art, as evidenced by publication of widely accepted materials, by receipt of major patents, or by having products or processes in the marketplace. The accomplishment can be a single contribution of extreme importance or an accumulation of smaller contributions that have led to the development of a body of knowledge in a field of engineering practice.

Livescu received a PhD in mechanical and aerospace engineering from the University at Buffalo and joined the Laboratory in 2001. He is a world leader in direct numerical simulation of turbulence and large-scale flow computations. He has led numerous open science proposals, including on Lawrence Livermore National Laboratory’s Dawn and Sequoia supercomputers and LANL’s Roadrunner. The Roadrunner proposal resulted in the first successful implementation of a large fluid dynamics code on the Cell architecture. Livescu has performed the largest simulations to date of various turbulent flows, approaching or exceeding the parameters achieved in typical experiments. The simulations have revealed new or unexpected physics and helped to develop LANL’s turbulence models. Over the last decade, Livescu has mentored 11 postdocs and 10 PhD students (as thesis co-advisor). Many of them now hold faculty positions at prestigious universities or are Laboratory staff members. Technical contact: Daniel Livescu

The American Society of Mechanical Engineers promotes the art, science and practice of multidisciplinary engineering and allied sciences around the globe. The society includes more than 140,000 members in 151 countries. ASME codes and standards, publications, conferences, continuing education and professional development programs provide a foundation for advancing technical knowledge and a safer world.

Brian Williams named American Statistical Association Fellow

Brian Williams

Brian Williams (Statistical Sciences, CCS-6) has been elected a Fellow of the American Statistical Association (ASA). The Fellow Award is a distinction reserved for fewer than one-third of one percent of the ASA membership. Williams is recognized for: 1) fundamental methodological contributions to the statistical design of experiments involving computer simulators and the analysis of data from such experiments, including uncertainty quantification, 2) excellence in leadership of uncertainty quantification in critical federal programs, 3) excellence in collaborative research, and 4) service to the ASA. He will be inducted as an ASA Fellow at a ceremony at the 2015 Joint Statistical Meetings in Seattle, WA, in August.

Williams earned a PhD in statistics from The Ohio State University. He worked at the RAND Corporation before joining the Laboratory in 2003. His research interests include experimental design, computer experiments, Bayesian inference, spatial statistics, and statistical computing. Williams contributes to the development and implementation of statistical methods for the design and analysis of computer experiments, focusing on the technical areas of sequential optimization, global sensitivity analysis, calibration, predictive maturity assessment and rare event inference for computer models. He is developing technology for efficient Bayesian experimental design. Williams coauthored a book entitled The Design and Analysis of Computer Experiments with Thomas J. Santner and William I. Notz of The Ohio State University. Awards include: 1) a Los Alamos Achievement Award for his leadership role in the Consortium for Advanced Simulation of Light Water Reactors (CASL) Program, serving as Deputy Lead of the Validation and Modeling Applications Focus Area; 2) a DOE/NNSA Defense Programs Award of Excellence for “significant achievements in advancing Quantification of Margins and Uncertainty (QMU),” supporting QMU analyses for certification of the FY07 W76 Life Extension Program and the W88 Major Assembly Release; and 3) a Distinguished Performance Award for his work supporting uncertainty quantification projects for the Advanced Simulation and Computing Primary Verification and Validation Assessment Team. Technical contact: Brian Williams

Christopher Lee chosen for DOE Office of Science Early Career Award

Christopher Lee

The DOE Office of Science, Office of Nuclear Physics has selected Christopher Lee (Nuclear and Particle Physics, Astrophysics and Cosmology, T-2) for a DOE Early Career Award. The program is designed to bolster the nation’s scientific workforce by providing support to exceptional researchers during the crucial early career years, when many scientists do their most formative work, and to stimulate research careers in the disciplines supported by the Office of Science. Awardees based at DOE national labs receive financial assistance for research expenses. Lee’s proposal, “Precision Probes of the Strong Interaction,” will develop high-precision theory to determine the structure of nucleons in terms of their constituent quarks and gluons and the strength of the force between them.

Lee earned a PhD in physics from the California Institute of Technology. He has been a postdoctoral researcher at the University of Washington, the University of California at Berkeley, and the Massachusetts Institute of Technology. He joined the Laboratory in 2012 as a staff scientist and has pursued research on effective field theories of the strong interaction probed in high-energy particle collisions and on the origin of matter in the early universe. Lee’s honors include a National Science Foundation Graduate Fellowship and a National Defense Science and Engineering Graduate Fellowship, and he was a finalist for the American Physical Society’s Leroy Apker Award. He has been a member of the Laboratory Directed Research and Development NPAC Category Proposal Review Committee since 2013 and has chaired that committee since 2015. Technical contact: Christopher Lee

Chemistry

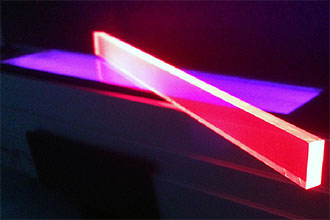

Conference celebrates 20 years of quantum dots at Los Alamos

Photo. Quantum dot luminescent solar concentrator devices under ultraviolet illumination.

The Lab’s Center for Applied Solar Photophysics (CASP) helped organize a conference celebrating 20 years of research into quantum dots. The “20 Years of Quantum Dots at Los Alamos” conference brought together researchers from around the world to reflect on the history of advances in colloidal quantum dots, examine the very latest developments in the field, and discuss the next steps toward the future of these materials. The New Mexico Consortium hosted the conference. The program committee included several past and present members of the Chemistry Division’s Nanotechnology and Advanced Spectroscopy (NanoTech) Team at the Lab.

Quantum dots are tiny particles of semiconductor matter that are a few nanometers across. The NanoTech team’s research focuses on colloidal or nanocrystal quantum dots, which are fabricated via colloidal chemistry. Due to their size-controlled electronic properties and chemical flexibility, quantum dots can be viewed as “artificial atoms” that could be engineered to address a specific physical problem or application. Researchers have driven the evolution of the dots from a subject of laboratory curiosity to a versatile class of materials exploited in a wide range of emerging technologies such as e-book readers and television sets.

Figure 1. Timeline of quantum dot research at Los Alamos National Laboratory.

Victor Klimov (Physical Chemistry and Applied Spectroscopy, C-PCS) is the LANL NanoTech team’s leader and the founder of the Laboratory’s quantum dot program. He gave a special introductory address at the conference with his personal perspective on the field’s evolution. Over the past two decades, the Laboratory’s quantum dot research has evolved from early studies of doped, colored glasses into a wide-ranging program spanning different areas of quantum dot science, from synthesis and spectroscopy to theory and devices.

Los Alamos has been at the cutting edge of quantum dot research since the field’s inception. The LANL team’s research has revealed many unexpected and unusual optical and physical properties of quantum dots, showing that they absorb photons and emit light quite differently from bulk solids. The Lab’s quantum dot team also has been responsible for several important advances in the area of quantum dot light emitting diodes (LEDs). A 2004 Nature paper reported an elegant approach to activating light emission from the quantum dots using “wireless” excitation via exciton transfer from a proximal quantum well. Moreover, the NanoTech team made the first practical demonstration of a new all-inorganic quantum dot LED architecture, which holds promise for robust and durable devices with highly stable performance. Recently, the researchers successfully exploited the idea of “interface engineering” to alleviate the problem of efficiency roll-off at high currents in quantum dot LEDs. LANL also discovered carrier multiplication in quantum dots, the process whereby absorption of a single photon produces multiple electron-hole pairs. The phenomenon could potentially boost the efficiency of solar-energy conversion in both photovoltaics and the generation of solar fuels. The pioneering work of the Los Alamos team transformed carrier multiplication from a theoretical concept for exceeding fundamental limits in solar-energy conversion into a technological reality. Technical contact: Victor Klimov
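Energy conservation sets a simple ceiling on carrier multiplication: each electron-hole pair needs at least the band-gap energy Eg, so a photon of energy hν can create at most floor(hν/Eg) pairs. The sketch below computes only that ideal ceiling; the function names and the example band gap are illustrative assumptions, and real quantum dots produce fewer pairs than this limit.

```python
# Minimal sketch: energy-conservation ceiling on carrier multiplication.
# Function names and example inputs are illustrative, not from the LANL work.

HC_EV_NM = 1239.84  # Planck constant times speed of light, in eV*nm


def photon_energy_ev(wavelength_nm: float) -> float:
    """Photon energy in eV for a wavelength given in nanometers."""
    return HC_EV_NM / wavelength_nm


def max_pairs(photon_ev: float, band_gap_ev: float) -> int:
    """Largest number of electron-hole pairs allowed by energy conservation.

    Each pair needs at least the band-gap energy, so the ideal ceiling is
    floor(photon energy / band gap); a photon below the gap creates none.
    """
    if photon_ev < band_gap_ev:
        return 0
    return int(photon_ev // band_gap_ev)


if __name__ == "__main__":
    e = photon_energy_ev(400.0)  # a violet photon, about 3.1 eV
    print(round(e, 2), max_pairs(e, 1.0))  # hypothetical 1.0 eV band gap
```

With a hypothetical 1.0 eV band gap, a 400 nm photon could in principle yield up to three pairs; the practical value of carrier multiplication depends on how close real dots come to such limits.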

Computer, Computational and Statistical Sciences

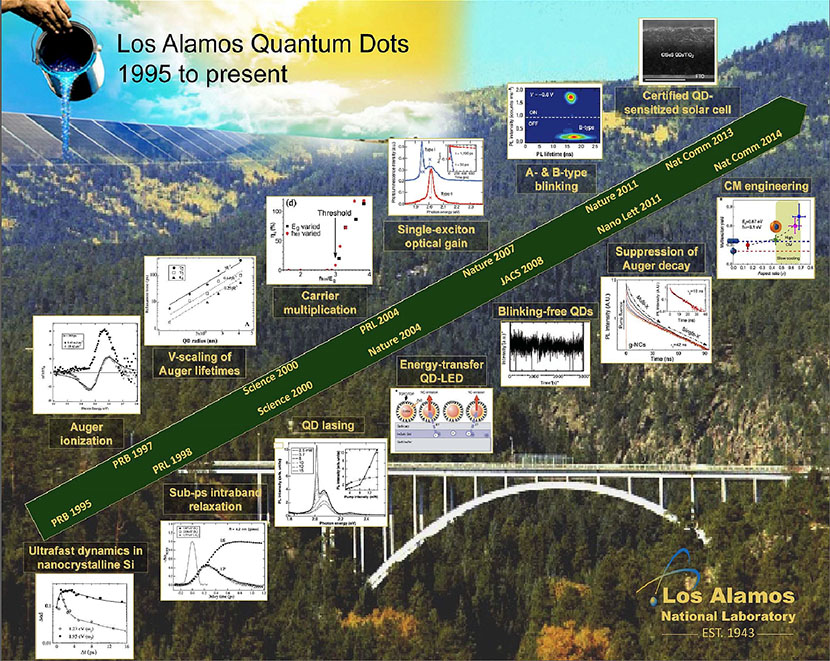

Performance modeling of a temperature accelerated molecular dynamics (AMD) code

Next-generation high-performance computing (HPC) will require more scalable and flexible performance prediction tools to evaluate software–hardware co-design choices relevant to scientific applications and hardware architectures. Lab researchers examined a new class of tools called “application simulators”. These parameterized fast-running proxies of large-scale scientific applications use parallel discrete event simulation. Parameterized choices for the algorithmic method and hardware options provide a rich space for design exploration to determine well-performing software–hardware combinations. The team demonstrated the approach by modeling the temperature-accelerated dynamics (TAD) method, an algorithmically complex and parameter-rich member of the accelerated molecular dynamics (AMD) family of molecular dynamics methods. The work captures the essence of the TAD application as a TADSim simulator without the computational expense and resource usage of the full code.

A parameterized application simulator models the key stages of an application as discrete events while abstracting time-intensive parts, or kernels, of the application. The logic of an application or its pseudocode is simulated, including loops, control flow, and termination conditions, similar to a state machine. Instrumentation is available for the collection of performance metrics. The software and hardware parameters specified in the simulator define the hardware and software design spaces. The application simulator approach is most useful for performance analysis of applications whose progression cannot be predicted prior to runtime. As asynchronous programming models become more common in high performance computing, performance prediction based on parallel discrete event simulation becomes increasingly attractive. The approach is especially powerful when the runtimes of certain code sections are not constant but vary significantly from one case to the next (e.g., if a nonlinear problem needs to be solved, or when the stop time of some procedure is a random variable).
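The idea of replacing expensive kernels with timed events can be sketched with a small serial discrete-event loop. This is not TADSim and not a parallel discrete event simulator; the stage names (`thermalize`, `md_block`, `check`), the exponential stage durations, and the transition probability are hypothetical parameters chosen only to show how control flow, termination conditions, and instrumentation fit together.

```python
import heapq
import random
from collections import Counter


def simulate(stage_costs, p_transition, max_states, seed=0):
    """Toy serial discrete-event application simulator.

    stage_costs: dict mapping a stage name to its mean cost; each stage's
        duration is drawn from an exponential distribution (an assumption).
    p_transition: probability that a transition check finds a new state.
    max_states: termination condition, the number of state-to-state moves.
    Returns (simulated wall-clock time, Counter of completed stages).
    """
    rng = random.Random(seed)
    events = []          # priority queue of (finish time, sequence, stage)
    seq = 0              # tie-breaker so heapq never compares stage names
    counts = Counter()   # instrumentation: how often each stage ran
    states_done = 0

    def schedule(now, stage):
        nonlocal seq
        duration = rng.expovariate(1.0 / stage_costs[stage])
        heapq.heappush(events, (now + duration, seq, stage))
        seq += 1

    schedule(0.0, "thermalize")
    now = 0.0
    while events:
        now, _, stage = heapq.heappop(events)  # this stage just finished
        counts[stage] += 1
        if stage == "thermalize":
            schedule(now, "md_block")
        elif stage == "md_block":
            schedule(now, "check")
        elif stage == "check":
            if rng.random() < p_transition:    # a transition was found
                states_done += 1
                if states_done >= max_states:
                    break                      # termination condition
                schedule(now, "thermalize")
            else:
                schedule(now, "md_block")      # keep running dynamics
    return now, counts


if __name__ == "__main__":
    costs = {"thermalize": 1.0, "md_block": 5.0, "check": 0.5}
    t, counts = simulate(costs, p_transition=0.2, max_states=10)
    print(round(t, 1), dict(counts))
```

Scanning `stage_costs` or `p_transition` over many seeds is the cheap "parameter scan" the text describes, since no molecular dynamics is actually run.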

Figure 2. (Left): Atomistic system used for the validation of TADSim, corresponding to an adatom on a silver (100) surface. The moving atoms are blue, the nonmoving atoms are black, and the adatom is shown in red. The adatom can hop to an adjacent binding site or perform a two-atom exchange to a next-nearest-neighbor site. A large number of higher-barrier processes are also available to this system. (Right): TADSim prediction of computational boost as a function of THigh for different core counts for a silver system (TLow = 300 K).

Temperature-accelerated dynamics, one member of the accelerated molecular dynamics family, illustrates the application simulator concept. TAD is an algorithm for reaching long time scales in molecular dynamics simulations. For most materials, the dynamical evolution on these longer time scales is characterized by infrequent events, in which the system makes occasional transitions from one state to another. Examples include the jump of a vacancy in a solid or of an atom on a surface. Much more complex events, sometimes involving many atoms, also occur. In a standard molecular dynamics simulation, this often means that considerable computing time can be invested without observing any significant microstructural change in the material. The AMD methods, of which TAD is one, exploit this infrequency to reach much longer times than would be possible with molecular dynamics alone. In TAD, a system is advanced from state to state at a temperature TLow, where transitions are very rare, using information from simulations at a higher temperature THigh, where the dynamics proceed much more rapidly. The essence of TAD is to statistically determine which of the transitions observed at THigh should have occurred first at TLow, in order to obtain proper dynamics. The process of advancing to a new state is stochastic and involves a number of different computational tasks (thermalization, running molecular dynamics, transition checks, etc.), each of which can take a variable amount of time to complete. Because of this algorithmic complexity, the number of parameters in the method, and the many ways the different steps can be carried out, predicting and optimizing the performance of TAD is challenging.
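The central TAD step, deciding which event observed at THigh would have happened first at TLow, can be sketched with the harmonic transition state theory extrapolation used in TAD: an event with barrier Ea seen at time t_high maps to t_low = t_high · exp[Ea (1/(kB·TLow) − 1/(kB·THigh))]. The event names, barriers, and times below are hypothetical, not silver data. Full TAD also decides when it is safe to stop the high-temperature run (using an assumed minimum prefactor and a confidence level); this sketch omits that step.

```python
import math

K_B_EV = 8.617333262e-5  # Boltzmann constant in eV/K


def extrapolate_time(t_high, barrier_ev, t_low_k, t_high_k):
    """Map an event time observed at t_high_k to t_low_k (harmonic TST)."""
    exponent = barrier_ev * (1.0 / (K_B_EV * t_low_k) - 1.0 / (K_B_EV * t_high_k))
    return t_high * math.exp(exponent)


def first_event_at_low_T(events, t_low_k, t_high_k):
    """events: list of (name, barrier_eV, time observed at high T, seconds).

    Returns (name, extrapolated low-temperature time) of the event that
    would have occurred first at the low temperature.
    """
    extrapolated = (
        (name, extrapolate_time(t, ea, t_low_k, t_high_k)) for name, ea, t in events
    )
    return min(extrapolated, key=lambda pair: pair[1])


if __name__ == "__main__":
    # Hypothetical events: the exchange is seen earlier at 600 K, but its
    # higher barrier pushes it much later when extrapolated to 300 K.
    observed = [("hop", 0.45, 5e-12), ("exchange", 0.70, 1e-12)]
    print(first_event_at_low_T(observed, 300.0, 600.0))
```

The example shows why event order can change between temperatures: higher-barrier events are accelerated more at THigh, so they must be pushed later when mapped back to TLow.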

The team used TADSim to quickly characterize the runtime performance and algorithmic behavior of the otherwise long-running simulation code. Validation against the actual TAD code showed close agreement in force calls and computational boost for the evolution of an example physical system, a silver adatom (an extra isolated atom) on a silver (100) surface (Figure 2 left). Figure 2 (right) shows TADSim’s prediction of computational boost as a function of THigh for increasing core counts. The flexibility of the approach enabled the prediction of performance for new algorithm extensions, such as speculative spawning of the compute-bound stages, without having to implement such a method in the TAD codebase. Focused parameter scans allowed studies of algorithm parameter choices over far more scenarios than would have been possible with the actual simulation. This led to interesting performance-related insights and suggested extensions: 1) the optimal high temperature THigh decreases with increasing TLow, 2) spawning certain tasks on different processors can be advantageous given a significant core-count budget, 3) frequent transition checks improve performance at large core counts but prove detrimental when cores are limited, and 4) raising THigh increases the potential speedup, but a value that is too high degrades performance by introducing excessive overhead. These results add value for future method development and physics research in molecular dynamics.

Reference: “TADSim: Discrete Event-based Performance Prediction for Temperature Accelerated Dynamics,” ACM Transactions on Modeling and Computer Simulation (TOMACS), Volume 25, Issue 3, Article 15, April 2015; doi: 10.1145/2699715. http://dl.acm.org/citation.cfm?id=2699715

Authors include: Susan Mniszewski and Stephan Eidenbenz (Information Sciences, CCS-3), Christoph Junghans (Applied Computer Science, CCS-7), Arthur Voter and Danny Perez (Physics and Chemistry of Materials, T-1).

Laboratory Directed Research and Development (LDRD) and a LANL Director’s postdoctoral fellowship sponsored the work, and the Lab’s Institutional Computing Program provided use of its high performance computing cluster resources. The research supported the Lab’s Energy Security mission area and the Information, Science, and Technology science pillar. Technical contacts: Susan Mniszewski and Arthur Voter

Earth and Environmental Sciences

Multi-Year Underground Nuclear Explosion Signatures Experiment Project launched

Figure 3. (Left): Micrograph of fractures created as part of the material and fracture properties work. (Right): A 1 cm resolution hillshade created from a digital elevation model as part of the change detection work.

The Underground Nuclear Explosion Signatures Experiment (UNESE) project addresses research and development efforts associated with nuclear explosion monitoring and verification and nuclear nonproliferation. The UNESE project will be conducted at the Nevada National Security Site (NNSS) to study legacy/existing explosion sites. It aims to address the following objectives:

- Advance capabilities in underground nuclear explosion (UNE) detection, site location, and identification.

- Develop and use NNSS-based test beds to examine physics-based hypotheses and develop new modeling capabilities for underground nuclear explosion signatures.

- Advance existing technologies and develop new technologies for monitoring and verification.

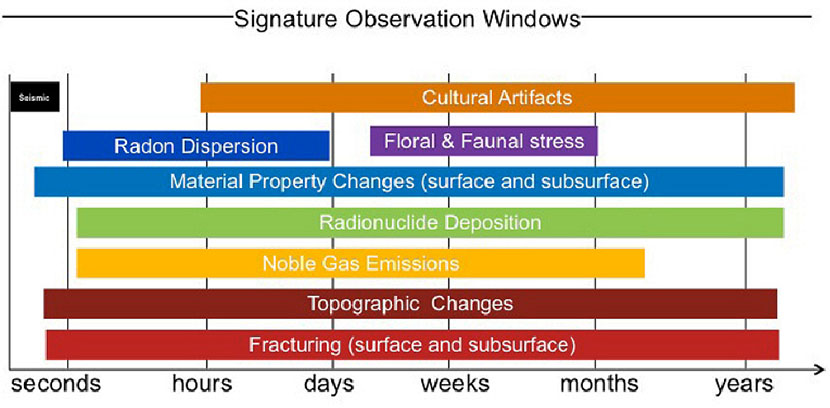

Underground nuclear explosions typically exhibit two categories of signatures: radiochemical (noble gases and radioparticulates emitted from underground nuclear explosions) and physical (visible or otherwise observable features formed at the surface or in the subsurface by underground nuclear explosions). UNESE does not address the seismic time frame, because seismic signals occur immediately after an underground explosion.

Figure 4. Signature observation windows for underground nuclear explosions. UNESE will assess each of the above signatures except for seismic. Each signature has a range of time (from seconds to years) in which it can be detected and observed.

These signatures and observables are expressed over a wide range of time scales. Investigating these signatures, particularly how, when, and where they develop, is the primary goal of the UNESE project. The large variation in signatures requires extensive collaboration among groups, divisions, and laboratories.

Aviva Sussman (Geophysics, EES-17) is the LANL Point of Contact for UNESE. Sussman co-wrote the Science Plan, solicited Laboratory proposals, facilitated proposal review efforts, and co-produced the multi-organizational UNESE 2015 Life Cycle Plan. DOE NA-22 approved the plan in April. The UNESE 2015 Life Cycle Plan will bring LANL $5.6 million over 3.5 years. Researchers from Earth System Observations (EES-14), Computational Earth Science (EES-16), EES-17, Subatomic Physics (P-25), and Materials Science in Radiation and Dynamics Extremes (MST-8) support this project as principal investigators and subject matter experts. More than 30 technical participants gathered in Las Vegas, NV, in May to officially kick off the UNESE project. The meeting reviewed prior work, and each principal investigator gave an overview of their funded FY15 and FY16 work. The research supports the Lab’s Global Security mission area and the Science of Signatures and Information, Science, and Technology science pillars through development of methods to investigate nuclear explosion monitoring, verification, and nuclear nonproliferation. Technical contact: Aviva Sussman

High Performance Computing

Analytical models evaluate the costs of reliability in fast storage tiers

Los Alamos researchers and collaborators are developing analytical models to guide the design and deployment of storage acceleration tiers. The team identified the conditions under which a storage acceleration tier must be reliable: when it stores non-deterministic data, or when coherent failure detection is impossible. Based on that analysis, the scientists determined that the burst buffer storage acceleration tier deployed at the Laboratory does not need to be reliable. A burst buffer is a collection of fast solid-state storage devices that provide higher performance than traditional hard disks, but at much greater cost and with lower capacity than traditional storage devices.

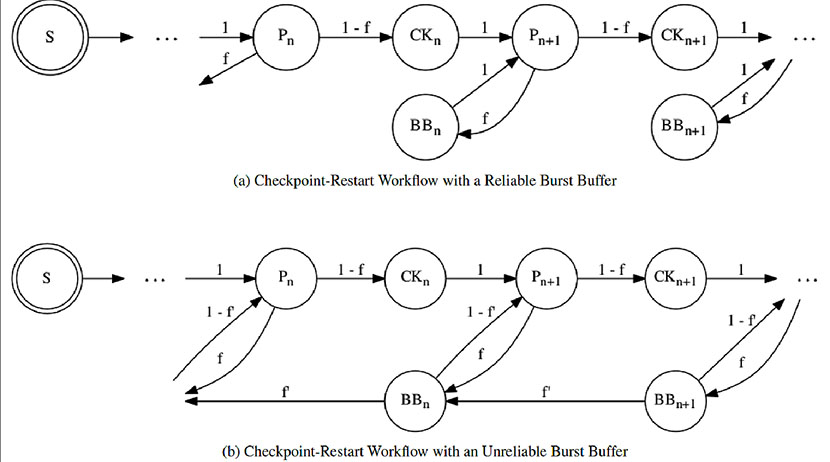

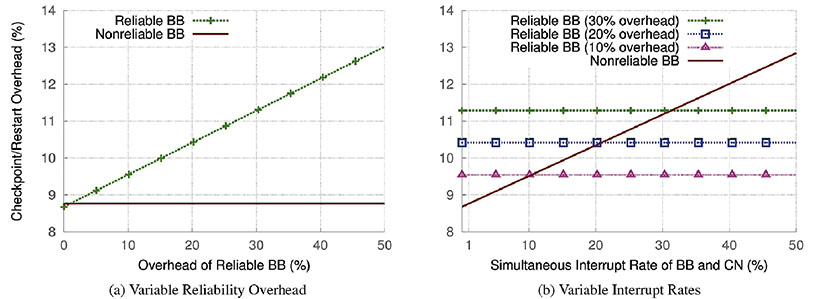

The researchers developed an analytical model comparing the system bandwidth of a reliable burst buffer versus the bandwidth of an unreliable burst buffer. Their analysis demonstrated that unless the performance loss associated with adding reliability to the burst buffer is less than 2%, unreliable burst buffers are preferable.

Figure 5. Typical high performance computing workflow. These Markov diagrams show a typical checkpoint-restart workflow for both a reliable and an unreliable burst buffer. A checkpoint is a snapshot of the current state of a computer application. When the application is interrupted due to a failure (e.g. an unexpected power outage), the checkpoint can be used to restart the program from the point where the checkpoint was collected, rather than restarting from the very beginning of the application execution. Pn represents a compute phase, CKn represents writing a checkpoint, and recovery/restart is represented with BBn. f and f' are the failure probabilities for the compute nodes and burst buffers, respectively. For clarity, the authors did not show a failure possibility during the checkpoint phase; note this does not affect the analysis.
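The trade-off in Figure 5 can be explored with a toy Monte Carlo model. This is not the authors' analytical model: the parameter names, the assumption that a failure wastes a whole compute phase, the parameter `f_bb_given_f` (probability that the burst buffer fails along with the compute nodes), and the worst-case rule that losing the burst buffer forces a restart from the beginning are all illustrative assumptions.

```python
import random


def run_job(n_phases, t_compute, t_ckpt, f, f_bb_given_f,
            parity_overhead, reliable, seed=0):
    """Toy checkpoint/restart model; returns total wall-clock time.

    Hypothetical assumptions (not the paper's model):
    * each phase computes for t_compute, then writes a checkpoint taking
      t_ckpt, or t_ckpt * (1 + parity_overhead) on a reliable burst buffer;
    * with probability f a compute-node failure wastes the whole phase and
      the job resumes from its latest checkpoint (restart cost ignored);
    * on an unreliable burst buffer, with probability f_bb_given_f the same
      failure also wipes the buffer, and the job restarts from phase 0.
    """
    rng = random.Random(seed)
    ckpt_cost = t_ckpt * (1.0 + parity_overhead) if reliable else t_ckpt
    total = 0.0
    phase = 0  # number of phases safely checkpointed so far
    while phase < n_phases:
        total += t_compute
        if rng.random() < f:                       # compute failure
            if not reliable and rng.random() < f_bb_given_f:
                phase = 0                          # checkpoints lost too
            continue                               # redo the phase
        total += ckpt_cost
        phase += 1
    return total


def mean_time(trials, **kwargs):
    """Average run_job over independent random seeds."""
    return sum(run_job(seed=s, **kwargs) for s in range(trials)) / trials


if __name__ == "__main__":
    common = dict(n_phases=50, t_compute=1.0, t_ckpt=0.1, f=0.02, f_bb_given_f=0.01)
    print("reliable  :", mean_time(2000, parity_overhead=0.2, reliable=True, **common))
    print("unreliable:", mean_time(2000, parity_overhead=0.0, reliable=False, **common))
```

In this toy setting the reliable buffer pays its parity cost on every checkpoint, while the unreliable one pays only when a correlated failure occurs; which wins depends on the assumed numbers, which is the kind of relationship the paper quantifies analytically.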

In leadership-class computer systems, computational power has grown much faster than the rate at which data can be stored safely. This results in an I/O bottleneck in which fast processors lie idle while waiting for data to be safely stored in the storage system. Protection against data loss (i.e., providing reliability) has long been the hallmark of storage system design. Burst buffers have emerged as a way to build a small, fast storage acceleration tier that prevents computational jobs from idling excessively. Because burst buffers are a novel concept, computer system designers and programmers are uncertain how best to construct a useful burst buffer. The Laboratory research helps engineers build better burst buffers by quantifying the relationship between burst buffer reliability and performance loss. This information enables the design of more capable storage acceleration tiers. The new analysis demonstrates that in some circumstances, computational progress can be improved by reducing the reliability of portions of the storage system. Unless the performance loss associated with making a burst buffer reliable is less than 2%, it is preferable to use an unreliable storage acceleration tier as a burst buffer.

Figure 6. Comparison of Reliable and Nonreliable Burst Buffers. These graphs plot the equations developed in the paper to compare the checkpoint/restart overhead imposed on applications when using a reliable or an unreliable burst buffer architecture. Graph 6a shows that a reliable burst buffer outperforms an unreliable burst buffer only when the performance cost of parity overhead is less than 2%. Parity overhead is protection data stored with a data set that ensures that if part of the data is lost (due to a storage media failure), the original data can still be reconstructed from the parity. This graph uses the projected exascale interrupt rates to fix the frequency of correlated compute node and burst buffer interruptions at one percent. Graph 6b explores the impact of further increasing the number of simultaneous failures in the unreliable burst buffer and the compute partition along the x-axis. Three reference lines are shown for reliable burst buffers with 10, 20, and 30 percent parity overheads. As long as the rate of simultaneous failures of both the burst buffer and the compute partition is less than 10%, an unreliable burst buffer provides better compute throughput than even low-overhead reliable burst buffers.
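To make "parity" concrete, here is a minimal sketch of single-parity XOR protection, the scheme used in RAID 5. It is one common example only; the paper's reliable burst buffer may assume a different erasure code. With k data blocks, one parity block adds 1/k extra storage, and computing and writing it is the kind of cost the figure calls parity overhead.

```python
from functools import reduce


def xor_blocks(blocks):
    """Bytewise XOR of equal-length byte strings."""
    return bytes(reduce(lambda x, y: x ^ y, column) for column in zip(*blocks))


def add_parity(data_blocks):
    """Return a stripe: the data blocks followed by one XOR parity block."""
    return list(data_blocks) + [xor_blocks(data_blocks)]


def rebuild(stripe, lost_index):
    """Recover any single lost block by XOR-ing all surviving blocks."""
    return xor_blocks([blk for i, blk in enumerate(stripe) if i != lost_index])


if __name__ == "__main__":
    stripe = add_parity([b"ckpt", b"rank", b"0042"])
    print(rebuild(stripe, 1))  # prints b'rank'
```

Because XOR is its own inverse, any one missing block (data or parity) equals the XOR of the others; losing two blocks in the same stripe, however, cannot be repaired with a single parity block.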

Reference: “On the Non-Suitability of Non-Volatility,” HotStorage ’15, The 7th USENIX Workshop on Hot Topics in Storage and File Systems, Santa Clara, CA. Researchers include Brad Settlemyer and Nathan DeBardeleben (System Integration, HPC-5) and John Bent, Sorin Faibish, Uday Gupta, Dennis Ting, and Percy Tzelnic (EMC Corporation).

The NNSA Advanced Simulation and Computing program funded the work, which supports the Lab’s Nuclear Deterrence mission area and the Information, Science, and Technology science pillar through the development of advanced computing capabilities in support of nuclear weapons stockpile stewardship. Technical contact: Brad Settlemyer

Materials Physics and Applications

Shining light on the exotic hidden order phase in a uranium compound

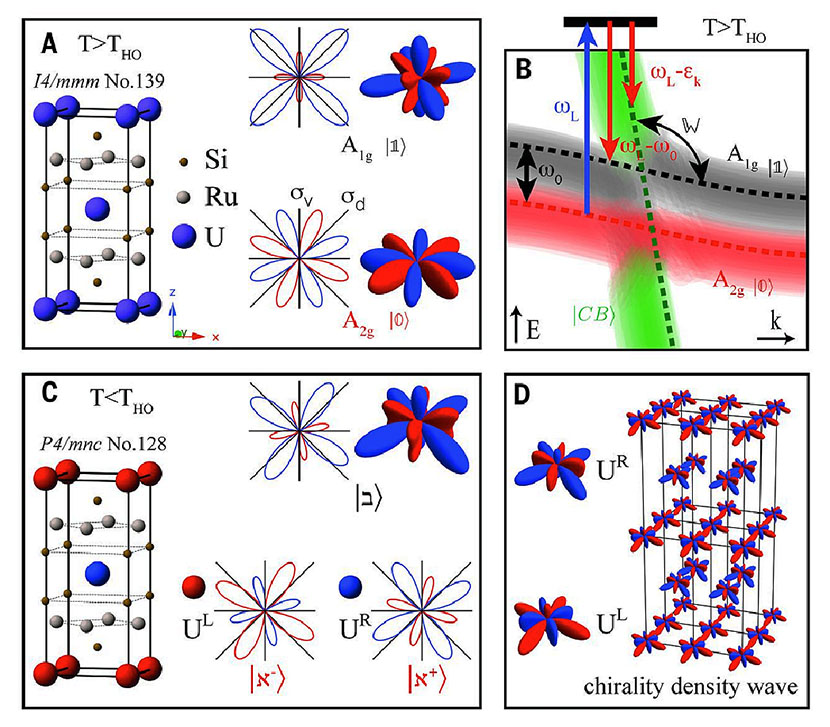

Interactions of actinide 5f electrons with mobile conduction electrons in a solid lead to a variety of exotic states of matter. The “hidden order” (HO) phase in the uranium-based intermetallic compound URu2Si2 is one of these unusual states, and it has puzzled physicists for more than 30 years. A research team that includes Laboratory scientists has made strides in explaining this mystery using sophisticated Raman spectroscopy on ultra-high-purity samples produced at Los Alamos. The results further the understanding of emergent phenomena studied as part of the Laboratory’s scientific pursuit of controlled materials functionality, which is central to the Laboratory’s materials strategy. The journal Science recently published the results online.

A change from one phase of matter into another is characterized by the symmetries that are broken. A well-known example is the breaking of rotational symmetry that occurs when liquid water freezes into ice. A second-order phase transition is associated with the emergence of an “order parameter,” which describes the state with reduced symmetry. URu2Si2 enters the hidden order phase below a temperature of 17.5 K, but the symmetry of the associated order parameter has remained ambiguous.
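The textbook mean-field (Landau) description of a generic second-order transition shows how an order parameter ψ appears continuously below the transition temperature T_c. This is a generic illustration only, not a model of URu2Si2; a and b are positive phenomenological coefficients. Minimizing the free energy F gives:

```latex
\begin{aligned}
F(\psi, T) &= F_0 + a\,(T - T_c)\,\psi^2 + b\,\psi^4, \qquad a, b > 0 \\
\frac{\partial F}{\partial \psi} &= 2a\,(T - T_c)\,\psi + 4b\,\psi^3 = 0 \\
\Rightarrow\quad \psi_{\min} &= 0 \quad (T \ge T_c), \qquad
\psi_{\min} = \pm\sqrt{\frac{a\,(T_c - T)}{2b}} \quad (T < T_c)
\end{aligned}
```

For URu2Si2, T_c = 17.5 K; the open question addressed by the work described here is not the magnitude of ψ but its symmetry.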

Researchers grew URu2Si2 single crystals at Los Alamos using the Czochralski method and studied them with polarization-resolved Raman spectroscopy at Rutgers University. The scientists measured the symmetry of the low-energy excitations above and below the hidden order transition. The team determined that the hidden order breaks local vertical and diagonal reflection symmetries at the uranium sites. This leads to uranium 5f electronic orbitals with distinct chiral properties, which order into a commensurate chirality density wave ground state.

Figure 7. Schematics of the local symmetry of the uranium 5f electronic states. The left- and right-handed uranium states, denoted by red and blue atoms, respectively, are staggered in the lattice. UL and UR denote the two non-equivalent uranium sites in the hidden order phase. The chirality density wave is illustrated in D.

Reference: “Chirality Density Wave of the ‘Hidden Order’ Phase in URu2Si2,” Science 347, 1339 (2015); doi: 10.1126/science.1259729. Rutgers University led the research. Authors include: Ryan Baumbach and Eric Bauer (Condensed Matter and Magnet Science, MPA-CMMS), H.-H. Kung, V. K. Thorsmølle, W.-L. Zhang, and K. Haule (Rutgers University); G. Blumberg (Rutgers University and National Institute of Chemical Physics and Biophysics, Estonia); and J. A. Mydosh (Leiden University, Netherlands).

The DOE, Office of Basic Energy Sciences, Division of Materials Sciences and Engineering sponsored the work at the Lab. The research supports the Laboratory’s Energy Security mission area and the Materials for the Future science pillar by advancing the understanding of emergent phenomena arising from strong correlations among electrons in solids. Technical contact: Eric Bauer

Materials Science and Technology

Neutron diffraction studies of high explosive powder during compaction

In work relevant to the Lab’s role as the design agency for sustaining the reliability and safety of B61 bombs that have exceeded their original design life, LANL researchers have obtained experimental results on the high explosive TATB (2,4,6-triamino-1,3,5-trinitrobenzene). By collecting the first data on a scale useful for developing constitutive mesoscale models, the team will help benchmark and improve modeling efforts that capture how TATB responds to temperature fluctuations and compaction. Literature reports on the thermal dependence of TATB lattice parameters and crystal structure do not always agree, and in situ measurements of texture evolution during pressing have never been made. These data are critical for developing mesoscale models that can inform system-level models, and they can be used to interpret and identify the mechanisms underlying other experimental findings.

Many explosives can detonate when exposed to fire or shock, and may partially react or be significantly damaged if a charge is dropped on the ground. TATB, by contrast, is very difficult to detonate by accident, which makes it a prime choice for applications that require extreme safety. However, TATB has undesirable properties, the most problematic being the anisotropy of its crystals. A detailed understanding of how TATB responds to temperature changes and compaction is therefore essential to mitigate, or at least accommodate, this problem.

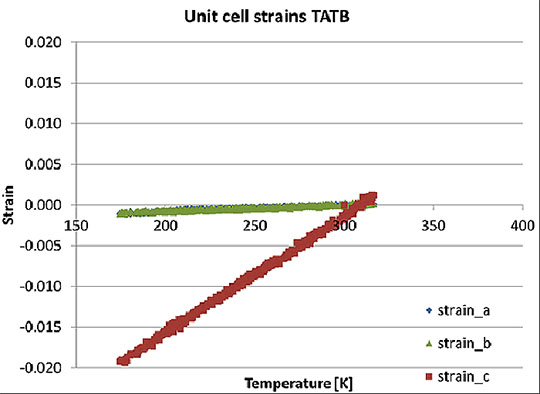

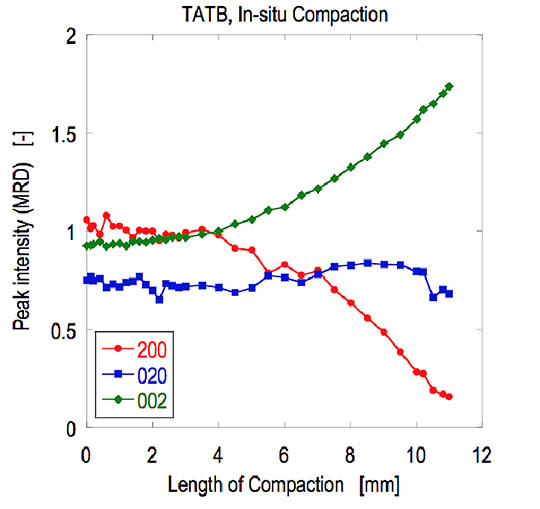

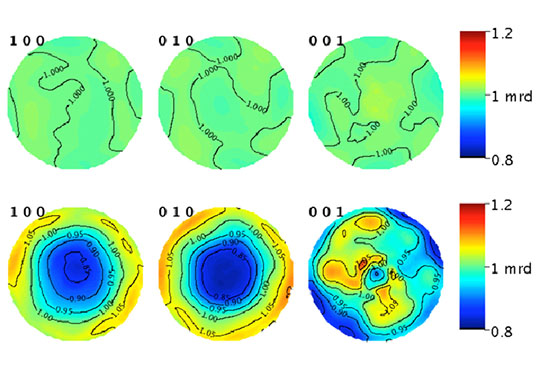

The crystal structure of TATB is triclinic, the lowest possible crystal symmetry. When heated, a TATB crystal expands about 10 times more along its crystallographic c-axis than along its a- and b-axes. When a pellet of compacted TATB is heated and cooled a few times, this anisotropic thermal expansion leads to a permanent change in the shape and size of the bulk material, an effect called ratchet growth. The temperature change experienced outdoors over the course of a year is sufficient to cause it. When the platelet-shaped crystals of TATB powder are compacted, they become preferentially oriented because of their shape, much like a deck of cards dropped on the floor: it is very unlikely to see a card standing on its edge. This preferred orientation, or texture, leaves more crystals with their c-axis parallel to the compaction direction, amplifying the effect of the thermal anisotropy on the bulk material.
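To make the texture argument concrete, the sketch below estimates how much a compacted pellet expands along its pressing axis. It is a deliberately simple orientation average, not the self-consistent model cited later in this article. It assumes each crystal is transversely isotropic (the material is described later in this article as pseudo-hexagonal), uses the factor of about 10 between c-axis and in-plane expansion in normalized units, and ignores the grain-to-grain constraint responsible for ratchet growth. All function names and values are hypothetical.

```python
import math

def directional_cte(alpha_a, alpha_c, theta):
    """Expansion coefficient of one crystal along a sample direction that makes
    angle theta (radians) with the crystal c-axis. Assumes transverse isotropy,
    i.e. alpha_a == alpha_b (a simplification of the triclinic structure)."""
    cos2 = math.cos(theta) ** 2
    return alpha_a * (1.0 - cos2) + alpha_c * cos2

def mean_cos2(thetas, weights=None):
    """Weighted average of cos^2(theta) over a set of c-axis tilts."""
    if weights is None:
        weights = [1.0] * len(thetas)
    total = sum(weights)
    return sum(w * math.cos(t) ** 2 for t, w in zip(thetas, weights)) / total

def bulk_cte(alpha_a, alpha_c, avg_cos2):
    """Orientation-averaged expansion along the sample direction, ignoring
    intergranular constraint. directional_cte is linear in cos^2, so its
    average equals its value at the mean of cos^2."""
    return alpha_a * (1.0 - avg_cos2) + alpha_c * avg_cos2

ALPHA_A = 1.0   # normalized in-plane coefficient (hypothetical units)
ALPHA_C = 10.0  # "about 10 times" larger along c, per the text

# For randomly oriented c-axes, <cos^2> = 1/3.
random_powder = bulk_cte(ALPHA_A, ALPHA_C, 1.0 / 3.0)
# Idealized texture: every c-axis lies along the pressing axis.
perfect_texture = bulk_cte(ALPHA_A, ALPHA_C, 1.0)
```

In these normalized units, a random powder averages 4 and the idealized textured pellet reaches 10. Any real pellet with c-axes concentrated along the pressing direction falls in between, which is the amplification described above.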

Researchers Don Brown, Bjorn Clausen, and Sven Vogel (Materials Science in Radiation and Dynamics Extremes, MST-8), John Yeager (Shock and Detonation Physics, M-9), and D. J. Luscher (Fluid Dynamics and Solid Mechanics, T-3) designed and conducted experiments to probe these effects in TATB crystals at the atomic and microstructural levels. The team used two instruments at the Los Alamos Neutron Science Center, the high-pressure/preferred orientation (HIPPO) neutron diffractometer and the Spectrometer for Materials Research at Temperature and Stress (SMARTS), to measure the lattice parameters and orientations of the crystals as a function of temperature and compaction level. The experiments simulated the temperature changes and compaction process experienced by a “real” component. The neutrons probed a large volume of crystals, so the diffraction data are representative of the bulk material. Comparable experiments at a synchrotron x-ray source, for example, would have sampled a much smaller amount of material and would require many more tests to gather equivalent information.

Figure 8. Relative change (strain) of the three crystallographic lattice parameters of TATB crystals as a function of temperature measured on the HIPPO neutron diffractometer.

The HIPPO neutron diffractometer measurements revealed the relative change in the lattice parameters of TATB powder as a function of temperature (Figure 8). Results from multiple heating and cooling cycles indicate that the unit-cell dimensions do not change with the number of cycles. The measured temperature dependence agrees closely with one literature report and partly disagrees with another, allowing the model developers to identify the correct data to use. Because the structure is pseudo-hexagonal, the curves for a and b overlap.
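The quantity plotted in Figure 8 is a lattice strain, the fractional change of a lattice parameter relative to its value at a reference temperature. The short sketch below shows that definition and a least-squares slope that turns a strain-versus-temperature curve into a linear expansion coefficient. The input numbers are invented for illustration and are not the HIPPO results; the function names are hypothetical.

```python
def lattice_strain(values, reference):
    """Relative change (strain) of a lattice parameter with respect to its
    value at a reference temperature."""
    return [(v - reference) / reference for v in values]

def linear_expansion_coefficient(temperatures, strains):
    """Least-squares slope of strain versus temperature (per kelvin)."""
    n = len(temperatures)
    t_mean = sum(temperatures) / n
    s_mean = sum(strains) / n
    num = sum((t - t_mean) * (s - s_mean) for t, s in zip(temperatures, strains))
    den = sum((t - t_mean) ** 2 for t in temperatures)
    return num / den

# Hypothetical readings for one axis, in arbitrary length units (not measured data).
temps_k = [300.0, 320.0, 340.0]
c_param = [10.0, 10.01, 10.02]
strains = lattice_strain(c_param, c_param[0])
alpha_c = linear_expansion_coefficient(temps_k, strains)
```

Applying `lattice_strain` separately to each heating and cooling cycle and comparing values at matching temperatures is one simple way to check the cycle independence reported above.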

Figure 9. Relative change of the peak intensity during compaction of a TATB powder measured on the SMARTS diffractometer. The (200), (020), (002) reflections correspond to the a, b, and c crystallographic axes, respectively. “Length of Compaction” refers to the plunger location as it presses into TATB powder in a plunger-and-die setup. Higher length means a higher degree of compaction in the powder.

Figure 10. Preferred orientation of TATB crystals for loose powder (top) and a compacted pellet (bottom) as measured on HIPPO and displayed as pole figures for the a (100), b (010), and c (001) axes. The sample cylinder axis is out of the plane of the paper. The contours are in multiples of random orientation (mrd), i.e., a perfectly random orientation would be 1 in all directions. The powder shows completely random orientation, while the pellet shows strong (001) orientation.

Crystal orientations in a material are typically displayed as pole figures. A pole figure is a contour plot showing how likely a given crystallographic direction (e.g., 100, 010, or 001, as labeled on the three pole figures) is to lie along each sample direction. Figure 10 shows that the powder has no measurable preferred orientation of the crystals, whereas the compacted pellet has a strong one. The texture of the pellet demonstrates that anisotropy exists not only at the single-crystal scale but also at the mesoscale to macroscale.
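The multiples-of-random (mrd) normalization used in Figure 10 can be illustrated with a simplified pole-density calculation. The sketch below bins pole vectors by their tilt from the sample cylinder axis (the center of each pole figure) and divides by the count a random distribution would give, so a random powder reads about 1 everywhere and a strongly textured pellet reads well above 1 near the center. It assumes axial (fiber) symmetry about the cylinder axis and ignores azimuth; actual pole figures are typically computed from full orientation distributions fitted to the diffraction data. The function name is hypothetical.

```python
import math

def mrd_profile(poles, n_bins=9):
    """Pole density in multiples of random distribution (mrd) versus tilt from
    the sample z axis, assuming the texture is axially symmetric about z.

    poles: nonzero (x, y, z) vectors of one crystal direction in sample axes.
    Returns n_bins values ordered from the center (tilt 0) to the rim (tilt 90).
    """
    counts = [0] * n_bins
    for x, y, z in poles:
        # +v and -v describe the same pole, so fold onto the upper hemisphere.
        cos_tilt = abs(z) / math.sqrt(x * x + y * y + z * z)
        k = min(int((1.0 - cos_tilt) * n_bins), n_bins - 1)
        counts[k] += 1
    # Bins of equal width in cos(tilt) are zones of equal area on the
    # hemisphere, so a random texture puts len(poles) / n_bins in each bin.
    return [c * n_bins / len(poles) for c in counts]
```

Feeding in poles that all point along the cylinder axis gives an mrd equal to `n_bins` in the central bin and 0 elsewhere, the extreme version of the (001) concentration seen in the pellet.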

The data will inform a new mesoscale continuum model developed by a Laboratory team. The model relates the thermal expansion of polycrystalline TATB specimens to their microstructural characteristics. LANL researchers will use the updated model to predict mesoscale effects in other geometries and experiments, and these predictions can be verified iteratively with further diffraction experiments at the Lujan Center.

Reference for the model: “Self-consistent Modeling of the Influence of Texture on Thermal Expansion in Polycrystalline TATB,” Modelling and Simulation in Materials Science and Engineering 22, 075008 (2014); doi: 10.1088/0965-0393/22/7/075008. Authors include: D. J. Luscher (Fluid Dynamics and Solid Mechanics, T-3), M. A. Buechler and N. A. Miller (Advanced Engineering Analysis, W-13).

The work is an example of science on the roadmap to MaRIE, the Laboratory’s proposed experimental facility for control of time-dependent material performance. MaRIE’s combination of x-ray and neutron scattering methods would provide unprecedented, time-resolved access to structural properties of materials from atomic- to meso-scales.

The B61 Life Extension Program (Project Realization Team Lead Daniel Trujillo) and Campaign 1: Primary Assessment Technologies (Program Manager Stephen Sterbenz) funded the work, which supports the Laboratory’s Nuclear Deterrence mission area and Science of Signatures and Materials for the Future science pillars through lifetime extension research on the explosives TATB and PBX 9502. LANL, Sandia National Laboratories, the U.S. Air Force, and the Department of Defense use science-based R&D to certify the lifetime of each component and the functionality of the system as a whole. If a component is too old and cannot be recertified for a life extension, then it could be rebuilt as designed or completely redesigned. Technical contacts: Sven Vogel (neutron diffraction) and John Yeager (TATB).

Physics

Lab’s muon imaging system going live at Fukushima Daiichi

A muon imaging system pioneered at the Laboratory will be deployed at Japan’s Fukushima Daiichi power plant by the end of 2015. An earthquake and tsunami in 2011 severely damaged the plant, leading to concerns that molten nuclear fuel spread from the reactor core to the pressure and containment vessels. The goal of the deployment is to reveal the amount, condition, and location of the highly radioactive nuclear fuel remaining inside the reactors, without exposing workers to the high radiation fields inside the reactor facilities.

Muon radiography uses muons, secondary particles generated when naturally occurring cosmic rays collide with nuclei in Earth’s upper atmosphere. Muons penetrate deeply into matter, so they can be used to image the objects they pass through. LANL researchers exploit the multiple scattering of muons in an object of interest to obtain 3-D images. Christopher Morris (Subatomic Physics, P-25) first developed this technique more than 10 years ago.
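Multiple Coulomb scattering is what makes the technique sensitive to nuclear fuel: dense, high-atomic-number materials have short radiation lengths and deflect muons far more than light materials do. The sketch below uses the standard Highland approximation for the RMS projected scattering angle to compare 10 cm of several materials, assuming a muon momentum of 3 GeV/c as a representative cosmic-ray value. It illustrates the physics only; it is not the Laboratory’s image-reconstruction code, and the helper names are hypothetical.

```python
import math

# Approximate radiation lengths in cm (Particle Data Group material tables).
RADIATION_LENGTH_CM = {
    "water": 36.08,
    "iron": 1.757,
    "lead": 0.5612,
    "uranium": 0.3166,
}

def highland_theta0(thickness_cm, x0_cm, p_mev, beta=1.0):
    """RMS projected multiple-scattering angle (radians) for a singly charged
    particle of momentum p_mev (MeV/c), from the Highland formula. Roughly
    valid for 1e-3 < thickness/X0 < 100."""
    t = thickness_cm / x0_cm
    return (13.6 / (beta * p_mev)) * math.sqrt(t) * (1.0 + 0.038 * math.log(t))

if __name__ == "__main__":
    for name, x0 in RADIATION_LENGTH_CM.items():
        theta = highland_theta0(10.0, x0, 3000.0)
        print(f"{name:8s} {theta * 1000:6.1f} mrad")
```

Because the angle spread for uranium is several times larger than for water or iron, tracking each muon before and after the reactor lets a reconstruction locate the dense fuel.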

The Laboratory Threat Reduction Team in P-25, together with Toshiba Corporation and Decision Sciences International Corporation (DSIC), will image reactor unit No. 2 using cosmic muons. The Los Alamos technique will provide Tokyo Electric Power Company with a “map” for safe removal of nuclear fuel from the damaged plant.

Photo. A picture of the detector, currently mounted in horizontal mode, at the Toshiba facility in Yokohama where the detectors were assembled. (Courtesy of Mark Saltus, DSIC)

Photo. Los Alamos National Laboratory postdoctoral researcher Elena Guardincerri (right), and undergraduate research assistant Shelby Fellows (left) prepare a lead hemisphere inside a muon tomography machine.

Laboratory participants include: Jeffrey D. Bacon, J. Matthew Durham, Joseph M. Fabritius II, Elena Guardincerri, Christopher Morris, Kenie O. Plaud-Ramos, Daniel C. Poulson, Zhehui Wang, and Shelby Fellows (all P-25).

Muon tomography and development of its application at Fukushima were made possible in part through the Laboratory Directed Research and Development (LDRD) program. Work for Others funding from Toshiba Corporation and the Tokyo Electric Power Company sponsored other aspects of the research. The work supports the Laboratory’s Global Security mission area and the Science of Signatures science pillar. The Lab’s muon tomography technology is also deployed in locations around the world to help detect smuggled nuclear materials. Technical contact: Elena Guardincerri

Science on the Roadmap to MaRIE

Neutron scattering of pore morphology in shale reveals pore structure and gas behavior

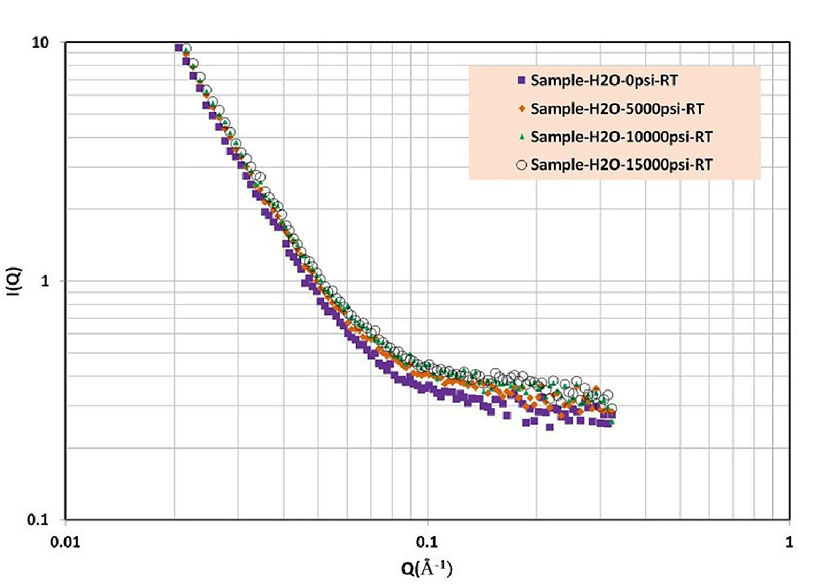

In an effort to maximize unconventional oil and gas recovery, Los Alamos researchers probed pore structure and water-methane fluid behavior in nanoporous shale rock at reservoir pressure and temperature conditions. The team used LQD, the small-angle neutron scattering (SANS) Low-Q Diffractometer, at the Los Alamos Neutron Science Center (LANSCE) for the studies. Their results reveal information key to understanding how nanopore structure determines the distribution of fluids in reservoirs and its impact on oil and gas recovery.
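Small-angle scattering measures intensity as a function of the scattering-vector magnitude q, and smaller q corresponds to larger structures. The sketch below shows the standard conversion from scattering angle and neutron wavelength to q, plus the common rule of thumb d ≈ 2π/q for the real-space length probed. On a time-of-flight instrument at a pulsed source such as LANSCE, each detected neutron’s wavelength comes from its flight time, so one detector angle covers a range of q. The function names and example values are illustrative assumptions.

```python
import math

def scattering_vector(two_theta_rad, wavelength_angstrom):
    """Magnitude of the scattering vector q (1/angstrom) for scattering
    angle 2*theta and neutron wavelength lambda: q = 4*pi*sin(theta)/lambda."""
    return 4.0 * math.pi * math.sin(two_theta_rad / 2.0) / wavelength_angstrom

def probed_length(q_inv_angstrom):
    """Characteristic real-space length d ~ 2*pi/q (angstroms) probed at q."""
    return 2.0 * math.pi / q_inv_angstrom

# Example with hypothetical values: a 1 degree scattering angle, 6 angstrom neutrons.
q = scattering_vector(math.radians(1.0), 6.0)
d = probed_length(q)
```

Lower q (smaller angles or longer wavelengths) reaches larger pores, which is why a low-q instrument suits nanopore structure in shale.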

Photo. A sample of shale rock used in the research.

The team used a pressure sample environment capable of 414 MPa and 250°C, and a gas-fluid mixing apparatus to deliver fluid mixtures to the pressure cell. The researchers performed in situ SANS measurements at elevated pressures up to 172 MPa and temperatures up to 66°C, with and without injected fluid. They discovered that the pores in shale are highly ramified, interconnected sheets, accessible to water and methane, that flatten with increasing pressure and squeeze out the fluid. Because hydraulic fracturing is designed to open cracks that allow fluid to flow, this result may give insight into how fluid is distributed in situ as a function of pore size and how that distribution would change during hydraulic fracturing.

The researchers plan to develop an instrument to examine the hydrocarbon flow behavior in nanoporous shale rocks under uniaxial stress. This representation of in situ reservoir conditions would provide the first experimental results on hydrocarbon phase behavior and flow properties in shale formations where nanopore size, geometry and connectivity are sensitive to pressure, strain, temperature, water content, and hydrocarbon species.

Figure 11. SANS measurements of shale at room temperature and various pressures reveal effects at small length scales.

The study lays the groundwork for time-dependent studies of fluid flow in nanopores, which are examples of science on the roadmap to MaRIE, the Laboratory’s proposed facility for time-dependent materials science at the mesoscale. MaRIE would make time-dependent, in situ studies of geomechanics and fluid flow possible with extremely high temporal and spatial resolution, allowing researchers to emulate gas and oil extraction procedures.

Participants include: Mei Ding and Hongwu Xu (Earth Systems Observations, EES-14), Erik Watkins (Materials Synthesis and Integrated Devices, MPA-11), Mel Borrego (LANSCE Weapons Physics, P-27), Daniel Olds and Rex Hjelm (Materials Science in Radiation and Dynamics Extremes, MST-8).

The DOE’s Office of Fossil Energy through National Energy Technology Laboratory’s Strategic Center for Natural Gas and Oil (LANL program manager George Guthrie) sponsored the work. The research supports the Laboratory’s Energy Security mission area and Materials for the Future science pillar through insight into how to optimize gas and oil extraction. Technical contact: Rex Hjelm